This AI tool analyzes a photo of a cancer patient’s face to estimate their biological age. (Chayanit/Shutterstock)

In a nutshell

- Cancer patients tend to “look” biologically older than their actual age, and this visual age difference, captured by an AI system called FaceAge, can strongly predict their survival odds across multiple cancer types.

- FaceAge outperformed doctors’ estimates and existing clinical tools when it came to forecasting life expectancy in terminally ill patients, offering a more objective way to guide treatment decisions.

- The AI’s age predictions were linked to real biological aging markers, including a significant genetic association with CDK6, a gene involved in cellular senescence, suggesting the tool may be measuring more than just surface-level appearance.

BOSTON — What if a simple photo of your face could reveal how long you might live? Scientists have developed an artificial intelligence system called FaceAge that can estimate a person’s “biological age” from facial photographs, potentially revolutionizing how doctors make life-or-death treatment decisions for cancer patients.

The international study, published in The Lancet Digital Health, found that cancer patients typically “look” about five years older than their actual chronological age. Those who appeared significantly older faced worse survival prospects, regardless of their actual age, cancer type, or other medical factors.

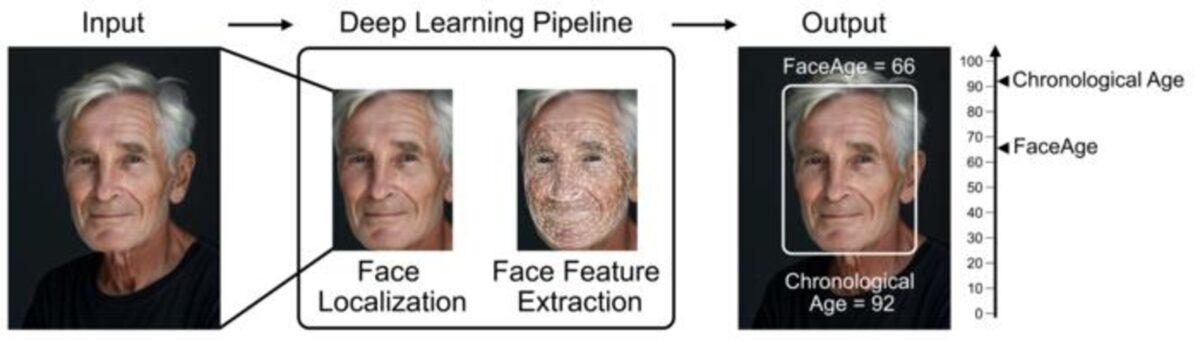

The research team explains that their AI model enhances cancer survival predictions by analyzing facial photographs to determine biological age based on visible features. This work is a collaboration between teams from Harvard Medical School and Maastricht University in the Netherlands.

Doctors often rely on subjective impressions when deciding if elderly or frail cancer patients can withstand aggressive treatments. FaceAge could provide objective data to support these critical choices, potentially saving lives by identifying who might benefit most from different treatment approaches.

How Your Face Reveals Your “True” Age

We all know people who look significantly younger or older than their actual age. This isn’t just cosmetic; it reflects how quickly a person is aging biologically due to genetics, lifestyle factors like smoking, and disease processes.

The research team developed FaceAge by training a deep learning system on nearly 60,000 face photographs from publicly available databases. The AI analyzes facial features that might indicate biological aging processes not captured by chronological age alone.

After training the system, researchers tested it on over 6,000 cancer patients from the Netherlands and the United States, comparing FaceAge estimates with actual survival outcomes. The findings showed that patients whose FaceAge was significantly older than their chronological age consistently had worse survival rates across multiple cancer types.

Current smokers looked significantly older (by about 33 months on average) than former smokers or those who never smoked. Patients’ body mass index (BMI) showed a surprisingly minimal relationship with FaceAge estimates.

Perhaps most compelling was FaceAge’s performance when predicting survival in patients with terminal cancer. In these cases, making accurate prognostic estimates is crucial for deciding whether to pursue aggressive treatments or focus on comfort care.

The Genetic Connection

The researchers also investigated whether FaceAge might reflect underlying molecular aging processes. They analyzed genetic data from 146 lung cancer patients, focusing on genes associated with cellular senescence, the biological process through which cells stop dividing as they age.

The AI’s predictions were associated with CDK6, a gene involved in cellular senescence. Past research has shown that CDK6 can slow down the aging process in cells. In this study, people who looked older according to the AI tended to have lower activity in this gene, suggesting the AI might be picking up on real biological signs of aging. A person’s chronological age, by contrast, didn’t show the same genetic connection.

Medical Decision Making

If this technology becomes mainstream, patients could receive a personalized assessment of how their body might respond to various cancer treatment options within seconds of their doctor taking their photo.

Today, doctors estimate a patient’s physical condition through subjective assessments and standardized but imperfect scales like the Eastern Cooperative Oncology Group (ECOG) performance status. FaceAge could provide a more objective measure, particularly valuable for elderly cancer patients, where the benefits of aggressive treatment must be carefully weighed against risks.

Researchers found that patients with cancer consistently looked older than their actual age across different cancer types, while patients with benign conditions had FaceAge estimates much closer to their chronological age. This suggests the combined effects of cancer and treatment accelerate biological aging processes visible in facial features.

Despite these promising results, significant challenges remain before FaceAge could enter clinical practice. The technology must be extensively validated in diverse populations to ensure it works equally well across different ethnicities, genders, and age groups. The researchers acknowledged potential biases in their training data, which included many photographs of well-known individuals who might have different lifestyle and socioeconomic factors affecting their aging patterns.

Ethical concerns must also be addressed. How would patients feel knowing an algorithm analyzed their face to predict their survival? Could insurance companies misuse such technology for determining coverage? These questions will require careful consideration as the technology develops.

Nevertheless, FaceAge could blend artificial intelligence and medicine to transform cancer care by capturing what numbers alone cannot. Tools like FaceAge could help ensure patients receive the most appropriate care for their biological, not just chronological, age.

Paper Summary

Methodology

Researchers developed a deep learning system called FaceAge to estimate biological age from face photographs. They trained the system on 56,304 publicly available face images from the IMDb-Wiki dataset and validated it on 2,547 images from the UTKFace dataset. The system uses two neural networks: one to detect and localize faces and another to extract features and estimate age. They then tested FaceAge on three clinical cohorts totaling 6,196 cancer patients from institutions in the Netherlands and the USA: the MAASTRO cohort (4,906 patients with various non-metastatic cancers), the Harvard Thoracic cohort (573 patients with thoracic cancers), and the Harvard Palliative cohort (717 patients with metastatic cancer). The researchers used statistical analyses including Kaplan-Meier survival analysis and Cox regression models to assess FaceAge’s prognostic value. They also conducted a survey with 10 medical professionals to compare FaceAge predictions with human performance in predicting six-month survival for 100 palliative care patients.
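For readers unfamiliar with Kaplan-Meier analysis, the sketch below shows the basic idea in plain Python: at each time a death is observed, the running survival probability is multiplied by the fraction of at-risk patients who survived that time point. This is only an illustration of the general technique, not the study's code. The function name `kaplan_meier` and the toy follow-up data are invented for this example and do not come from the paper.

```python
from collections import Counter


def kaplan_meier(durations, events):
    """Return [(time, survival_probability)] at each distinct death time.

    durations: follow-up time for each patient (any consistent unit)
    events:    True if death was observed, False if the patient was censored
    """
    deaths = Counter(t for t, e in zip(durations, events) if e)
    leaving = Counter(durations)      # everyone exits the risk set at their own time
    at_risk = len(durations)
    survival = 1.0
    curve = []
    for t in sorted(leaving):
        d = deaths.get(t, 0)
        if d:
            # Multiply by the fraction of at-risk patients who survived time t.
            survival *= 1 - d / at_risk
            curve.append((t, survival))
        at_risk -= leaving[t]         # deaths and censored patients both leave
    return curve


# Hypothetical toy data (months of follow-up), NOT taken from the study.
older_looking = kaplan_meier([2, 4, 4, 7, 9], [True, True, True, False, True])
younger_looking = kaplan_meier([5, 8, 10, 12, 12], [True, False, True, False, False])

for label, curve in [("older-looking", older_looking),
                     ("younger-looking", younger_looking)]:
    print(label, [(t, round(s, 3)) for t, s in curve])
```

Censored patients (those still alive at last follow-up) never lower the curve directly; they only shrink the at-risk group for later time points, which is why the estimator can use incomplete follow-up.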

Results

FaceAge demonstrated significant prognostic value across multiple cancer types and stages. Patients who looked older according to FaceAge had worse survival rates, with each decade increase in FaceAge associated with about 15% higher mortality risk after adjusting for clinical factors. Cancer patients looked approximately 5 years older than their chronological age on average, with current smokers looking significantly older than former or never smokers. When incorporated into clinical prediction models for end-of-life care, FaceAge improved performance compared to using chronological age. Genetic analysis found that FaceAge was significantly associated with CDK6, a gene involved in cellular senescence, while chronological age showed no significant genetic associations. In the survey, physicians’ ability to predict six-month survival improved significantly when they had access to FaceAge predictions in addition to clinical information.
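To put the "about 15% per decade" figure in context, the sketch below rescales it to other age gaps. It assumes FaceAge enters a standard Cox model as a continuous covariate, so the hazard ratio scales multiplicatively with the size of the gap; the article does not give the exact model specification, so treat this as an approximation. The helper `hazard_ratio_for_gap` is hypothetical, and 1.15 is the article's rounded value.

```python
def hazard_ratio_for_gap(gap_years, hr_per_decade=1.15):
    """Hazard ratio implied by a FaceAge difference of `gap_years`.

    Assumes a log-linear (Cox-style) effect: HR(gap) = hr_per_decade ** (gap / 10).
    The default of 1.15 is the rounded per-decade figure quoted in the article.
    """
    return hr_per_decade ** (gap_years / 10)


# Relative mortality risk for a few illustrative gaps.
for gap in (1, 5, 10, 20):
    print(f"{gap:>2} years older-looking: HR of about {hazard_ratio_for_gap(gap):.3f}")
```

Under this assumption, the roughly five-year gap that cancer patients showed on average would correspond to about a 7% higher mortality risk, and a 20-year gap to about 32%.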

Limitations

The study acknowledges several limitations. The training data may contain biases, as it included many well-known individuals who might have different lifestyle factors, cosmetic alterations, or digital image touch-ups affecting their facial appearance. The model showed some bias across different ethnic groups, though this effect was not substantial. Sample sizes in some subcohorts were small, potentially affecting statistical significance after adjustment for clinical covariates. The researchers note that further validation in larger, more diverse cohorts is needed before clinical implementation, along with optimization to address potential biases related to technical factors, health status, and race.

Funding and Disclosures

The study was funded by the US National Institutes of Health and the European Research Council. Multiple authors disclosed potential conflicts of interest, including Mass General Brigham submitting a patent based on the study results. Some authors reported financial relationships with pharmaceutical companies and other entities outside the submitted work.

Publication Information

The paper titled “FaceAge, a deep learning system to estimate biological age from face photographs to improve prognostication: a model development and validation study” was published in The Lancet Digital Health in 2025. The study was led by researchers from the Artificial Intelligence in Medicine Program at Mass General Brigham and Harvard Medical School, with collaboration from Maastricht University in the Netherlands and other institutions.

I read 30 years ago about medical practitioners in Asia who diagnosed people merely by smelling them as they got near. AI has a long way to go.

Cancer causes physical alterations in your whole body. Merely using computer algorithms to do quickly what people could already do is nothing to get excited about.