Certain people can remember a face years after a brief encounter. (Credit: fizkes on Shutterstock)

In A Nutshell

- Super-recognizers don’t just see more; they sample face regions that carry more identity information.

- Researchers rebuilt what each glance sent to the retina, then used nine AI models to test its ID value.

- Their viewing advantage holds even when the total amount of seen information is the same.

- Takeaway: Early “input” differences may help explain why some people excel at recognizing faces.

Some people can spot a familiar face in a crowded airport years after a single meeting, while others struggle to recognize their own neighbors. Scientists have long wondered what separates these “super-recognizers” from the rest of us. New research suggests that part of the answer lies not only in their brains, but in how they use their eyes.

Research published in Proceedings of the Royal Society B used artificial intelligence to decode the visual strategies of people with exceptional face recognition abilities. Researchers at the University of New South Wales discovered that super-recognizers don’t just remember faces better. They look at them differently from the moment they first see them, capturing information that’s more valuable for identification.

How AI Measured What Human Eyes Actually See

The breakthrough came from combining eye-tracking technology with deep neural networks trained specifically for facial recognition. After reconstructing the information each person’s eyes captured while learning new faces, the scientists fed it to AI systems that measured how useful it was for identifying people.

Lead researcher Dr. James Dunn and his team tracked the eye movements of 37 super-recognizers and 68 typical viewers as they studied unfamiliar faces. Super-recognizers qualified for the study by scoring more than 1.7 standard deviations above the mean on three separate face recognition tests, placing them in an elite category of ability. Using eye-tracking equipment, the researchers recorded where participants looked and for how long, then reconstructed the visual information their retinas actually captured at each glance.
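The 1.7 standard deviation cutoff can be read as a z-score threshold. The sketch below is only an illustration: the function names, the test scores, and the norm values are hypothetical, and it assumes the cutoff must be met on each of the three tests, which the article does not spell out.

```python
def z_score(score, norm_mean, norm_sd):
    """Distance of a score from the norm mean, in standard deviations."""
    return (score - norm_mean) / norm_sd


def is_super_recognizer(scores, norms, threshold=1.7):
    """Return True if every test score is more than `threshold` SDs above its norm mean.

    `scores` holds one score per test; `norms` holds a (mean, sd) pair per test.
    Requiring the cutoff on every test is an assumption made for this sketch.
    """
    if len(scores) != len(norms):
        raise ValueError("need one (mean, sd) pair per test score")
    return all(
        z_score(score, mean, sd) > threshold
        for score, (mean, sd) in zip(scores, norms)
    )


# Hypothetical norms for three tests: (mean, standard deviation).
example_norms = [(70.0, 10.0), (75.0, 10.0), (70.0, 10.0)]
print(is_super_recognizer([90.0, 95.0, 88.0], example_norms))
print(is_super_recognizer([90.0, 95.0, 80.0], example_norms))
```

In the first call every score sits at least 1.8 standard deviations above its mean, so the person qualifies; in the second, the third score is only 1.0 standard deviation above the mean, so the person does not.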

The team used nine different deep neural networks, each trained on massive datasets of faces, to evaluate whether the information sampled by super-recognizers was genuinely more useful for face recognition. These networks determined whether one person’s viewing strategy captured better face identity information than another’s.
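One common way to score how much identity information an input carries is to compare face embeddings. The sketch below assumes each network turns an image into a numeric vector, and that a partially sampled “probe” image is matched to whichever full “gallery” image has the most similar vector. The article does not describe the study’s exact metric, so the function names and this nearest-match scoring rule are illustrative assumptions.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two embedding vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def identity_matching_accuracy(probes, gallery):
    """Fraction of probes whose most similar gallery embedding has the same identity.

    Assumes probes[i] and gallery[i] are embeddings of the same person.
    """
    correct = 0
    for i, probe in enumerate(probes):
        sims = [cosine_similarity(probe, g) for g in gallery]
        best = max(range(len(gallery)), key=sims.__getitem__)
        if best == i:
            correct += 1
    return correct / len(probes)


# Toy three-dimensional embeddings standing in for real network outputs.
gallery = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
informative_probes = [[0.9, 0.1, 0.0], [0.1, 0.8, 0.1], [0.0, 0.2, 0.7]]
degraded_probes = [[0.9, 0.1, 0.0], [0.7, 0.2, 0.1], [0.0, 0.2, 0.7]]

print(identity_matching_accuracy(informative_probes, gallery))
print(identity_matching_accuracy(degraded_probes, gallery))
```

Under this framing, a viewing strategy that samples more diagnostic regions should yield probe embeddings that sit closer to the correct identity, and so a higher accuracy.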

It’s Not About Looking Longer—It’s About Looking Smarter

Across all nine neural networks, the results were consistent. Identity matching accuracy was higher when the networks used visual information sampled by super-recognizers than when they used information sampled by typical viewers. Even more telling, this advantage held up after researchers controlled for the sheer quantity of information collected.

Super-recognizers weren’t just looking longer or scanning more of the face. They were selectively targeting facial regions with higher diagnostic value—the features that actually matter for telling people apart.

Previous studies have produced conflicting results about whether super-recognizers rely more heavily on specific features like eyes or noses. This inconsistency makes sense given the new findings, since the most diagnostic features likely vary from face to face. Rather than fixating on any single region, super-recognizers appear to distribute their gaze more broadly, perhaps allowing them to discover which features are most useful for each individual face.

The Experimental Setup

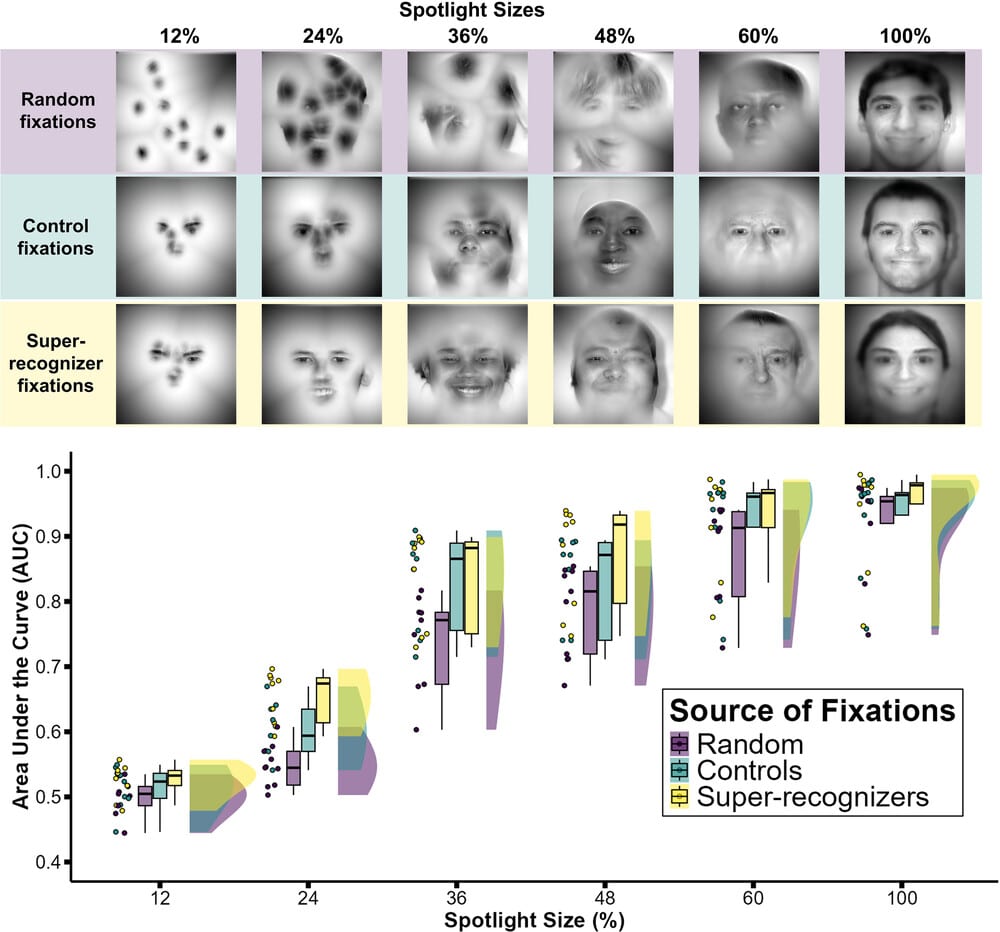

The study involved a specialized experimental setup where participants viewed faces through gaze-controlled “spotlight” apertures that restricted visual information to a region surrounding their point of fixation. These apertures varied in size across trials, revealing anywhere from 12% to 100% of each face. Participants studied new faces during a learning phase, then later tried to recognize whether they’d seen each face before.
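A gaze-contingent spotlight can be pictured as a mask that follows the eye. The sketch below assumes a circular aperture whose area equals the stated fraction of the image; the article does not say how the study defined its percentages, so that rule, the function names, and the image size are assumptions for illustration.

```python
import math


def spotlight_radius(height, width, fraction):
    """Radius in pixels of a circle covering `fraction` of a height x width image."""
    return math.sqrt(fraction * height * width / math.pi)


def spotlight_mask(height, width, fixation, fraction):
    """Boolean grid that is True where the face is visible around the fixation.

    `fixation` is a (row, column) pixel position. A fraction of 1.0 or more
    reveals the whole image.
    """
    if fraction >= 1.0:
        return [[True] * width for _ in range(height)]
    fix_row, fix_col = fixation
    radius_sq = spotlight_radius(height, width, fraction) ** 2
    return [
        [(row - fix_row) ** 2 + (col - fix_col) ** 2 <= radius_sq
         for col in range(width)]
        for row in range(height)
    ]


# Smallest aperture from the article (12%), fixation at the image center.
mask = spotlight_mask(200, 200, fixation=(100, 100), fraction=0.12)
visible = sum(sum(row) for row in mask) / (200 * 200)
print(round(visible, 2))
```

When the fixation is near an image edge, part of the circle falls outside the image, so the visible share drops below the nominal fraction; a real implementation would need to decide how to handle that case.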

Face images came from a database featuring diverse faces varying in gender, age, and ethnicity. Each person appeared in two photographs taken minutes apart—one with a neutral expression and one smiling—to ensure the AI was matching identities rather than just matching identical images.

Born, Not Made

Face recognition ability has a strong genetic basis and is separable from other forms of intelligence and from object recognition. It resists training and remains stable over time, functioning as a cognitive trait rather than a learned skill. Given the dedicated neural systems that evolved for face identity processing in primate species, understanding what sets super-recognizers apart provides insight into biological visual expertise more broadly.

The research points to differences emerging at the earliest stages of visual processing, right where information hits the retina. Much previous work on face recognition ability has focused on high-level processing—how the brain represents and stores facial information. But these new findings suggest that any representational advantages super-recognizers might have are at least partly driven by differences in what information they collect in the first place.

Paper Summary

Methodology

Researchers recruited 37 super-recognizers (individuals who scored more than 1.7 standard deviations above the mean on three face recognition tests) and 68 typical viewers. Participants completed a face recognition task while wearing eye-tracking equipment. During the learning phase, they viewed faces through gaze-contingent “spotlight” apertures of varying sizes (12%, 24%, 36%, 48%, 60%, or 100% of the face visible around their fixation point). Researchers recorded where participants looked and reconstructed the retinal information captured at each fixation using mathematical models that simulate how visual acuity declines with distance from the point of gaze. They then evaluated this reconstructed visual information using nine different deep neural networks trained for face recognition, testing whether information sampled by super-recognizers had higher computational value for identity processing than information sampled by typical viewers or random fixation patterns.
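The decline of acuity away from the point of gaze can be sketched with a simple falloff curve. The example below is not the study’s model: it assumes a hyperbolic falloff in which relative resolution halves at an eccentricity of `half_res_ecc_deg` degrees (2.3 is an illustrative value), a hypothetical `deg_per_pixel` conversion, and a rule that each pixel keeps the best resolution any fixation gave it.

```python
import math


def relative_acuity(ecc_deg, half_res_ecc_deg=2.3):
    """Relative resolution at a given eccentricity: 1.0 at fixation, 0.5 at half_res_ecc_deg."""
    return half_res_ecc_deg / (half_res_ecc_deg + ecc_deg)


def acuity_map(height, width, fixations, deg_per_pixel):
    """Best relative acuity reached at each pixel across all fixations.

    `fixations` is a list of (row, column) pixel positions. Taking the maximum
    over fixations is an assumption made for this sketch.
    """
    amap = [[0.0] * width for _ in range(height)]
    for fix_row, fix_col in fixations:
        for row in range(height):
            for col in range(width):
                ecc = math.hypot(row - fix_row, col - fix_col) * deg_per_pixel
                amap[row][col] = max(amap[row][col], relative_acuity(ecc))
    return amap


# Two fixations on a tiny 5 x 5 grid, one degree of visual angle per pixel.
amap = acuity_map(5, 5, fixations=[(0, 0), (4, 4)], deg_per_pixel=1.0)
for row in amap:
    print([round(value, 2) for value in row])
```

A map like this could then be used to degrade each face image region by region before it is passed to a face recognition network, so that the network only receives detail the viewer could plausibly have seen.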

Results

Across all nine neural networks, identity matching accuracy was higher with visual information sampled by super-recognizers than with information sampled by typical viewers. This advantage persisted even after controlling for the total quantity of information captured, indicating that super-recognizers selectively sample facial features with higher diagnostic value for identification. Super-recognizers also distributed their gaze more broadly across faces than typical viewers did. Face stimuli came from the Lifespan Database of Adult Facial Stimuli, which features diverse demographics.

Limitations

The study used static images of faces rather than dynamic video, which may not fully capture how people process faces in real-world settings. The gaze-contingent viewing paradigm, while allowing precise control over visual information, differs from natural face viewing conditions. All participants were tested in laboratory conditions rather than applied settings where super-recognizers often work.

Funding and Disclosures

This work was funded by Australian Research Council Discovery Project DP190100957. The authors declared no competing interests and stated they did not use AI-assisted technologies in creating the article.

Publication Information

Dunn, J.D., Varela, V., Popovic, B., Summersby, S., Miellet, S., & White, D. (November 5, 2025). “Super-recognizers sample visual information of superior computational value for facial recognition,” published in Proceedings of the Royal Society B, 292, 20252005. doi:10.1098/rspb.2025.200