READING, England — Artificial intelligence can not only pass college exams but often outperform human students, all while remaining virtually undetected, according to a new study. The research, conducted at the University of Reading, serves as a “wake-up call” for the education sector, highlighting the urgent need to address the challenges posed by AI in academic settings.

The study, published in the journal PLOS ONE and described as a real-world “Turing test,” involved secretly submitting AI-generated exam answers alongside those of real students across five undergraduate psychology modules. The results were striking: 94% of the AI-written submissions went undetected by examiners, despite being produced entirely by an AI system without any human modification.

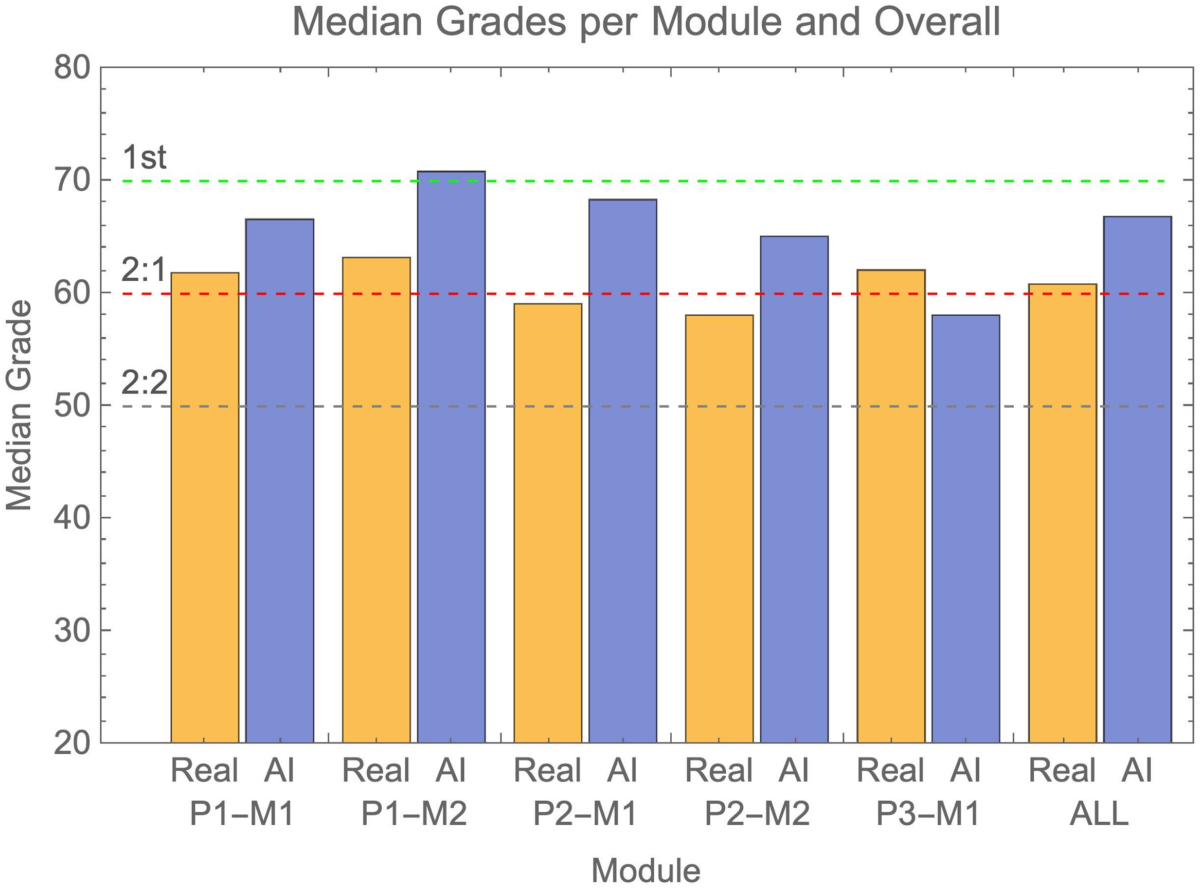

But the surprises didn’t end there. Not only did the AI submissions fly under the radar, but they also consistently outperformed their human counterparts. On average, the AI-generated answers scored half a grade boundary higher than those of real students. In some cases, the AI advantage approached a full grade boundary, with AI submissions achieving first-class honors while human students lagged behind.

This revelation raises profound questions about the future of education and assessment. As AI technologies like ChatGPT become increasingly sophisticated and accessible, how can universities ensure the integrity of their exams and the value of their degrees? The study’s findings suggest that current methods of detecting AI-generated content are woefully inadequate, leaving educational institutions vulnerable to a new form of high-tech cheating.

“Many institutions have moved away from traditional exams to make assessment more inclusive. Our research shows it is of international importance to understand how AI will affect the integrity of educational assessments,” says co-author Peter Scarfe, an associate professor at Reading’s School of Psychology and Clinical Language Sciences, in a statement.

“We won’t necessarily go back fully to hand-written exams, but [the] global education sector will need to evolve in the face of AI,” he continues. “It is testament to the candid academic rigour and commitment to research integrity at Reading that we have turned the microscope on ourselves to lead in this.”

The implications of this research extend far beyond the halls of academia. In a world where AI can seamlessly mimic human intelligence in written assessments, employers may find themselves questioning the reliability of academic credentials. Moreover, the very nature of learning and knowledge acquisition could be called into question. If AI can ace exams without understanding or retaining information, what does this mean for the future of education and professional development?

The researchers behind the study emphasize that their findings should not be seen as an indictment of current educational practices but rather as a call to action. They argue that the education sector must adapt to this new reality, finding ways to harness the power of AI while maintaining the integrity and value of human learning.

“As a sector, we need to agree how we expect students to use and acknowledge the role of AI in their work. The same is true of the wider use of AI in other areas of life to prevent a crisis of trust across society,” adds co-author Etienne Roesch, a professor in Reading’s School of Psychology and Clinical Language Sciences. “Our study highlights the responsibility we have as producers and consumers of information. We need to double down on our commitment to academic and research integrity.”

As we grapple with these challenges, one thing is clear: the landscape of education is changing rapidly, and institutions must evolve to keep pace. The ability to detect and manage AI use in academic settings will likely become a crucial skill for educators and administrators alike. At the same time, there may be opportunities to integrate AI into the learning process in ways that enhance rather than undermine human education.

This alarming study serves as both a warning and an invitation – a chance to reimagine education for the AI age. As we move forward, the goal will be to find a balance that embraces technological advancement while preserving the unique value of human intelligence and creativity.

Paper Summary

Methodology

The researchers conducted their experiment by submitting AI-generated exam answers to five different undergraduate psychology modules at the University of Reading. They used GPT-4, a powerful AI language model, to produce these answers. The AI submissions made up about 5% of the total answers for each module, blending in with the real student submissions. The exams included both short answer questions and longer essay-style responses. Importantly, the researchers did not modify the AI-generated content in any way, simply using the “regenerate” button to create multiple unique answers when needed. The AI submissions were then graded alongside human submissions by regular examiners who were unaware of the experiment.

Results

The study’s key findings were striking. A mere 6% of AI submissions were flagged as suspicious, meaning an overwhelming 94% went completely undetected. Even more remarkably, the AI consistently outperformed human students, with median grades typically falling in the 2:1 or 1st class range.

On average, AI submissions scored half a grade boundary higher than their human counterparts, with some cases approaching a full grade boundary difference. The AI’s performance was strikingly consistent – in four out of five modules, there was nearly a 100% chance that the AI submissions would outperform a random selection of the same number of human submissions.
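The “nearly 100% chance” figure describes a resampling comparison, which can be sketched in a few lines of Python. This summary does not spell out the exact procedure, so the sketch below is an assumption: it repeatedly draws a random set of human marks the same size as the AI set and counts how often the AI median is higher. The function name `prob_ai_outperforms` and the marks in the example are hypothetical and are not data from the study.

```python
import random
from statistics import median


def prob_ai_outperforms(ai_marks, human_marks, n_draws=10_000, seed=0):
    """Estimate how often the AI median beats the median of a random,
    same-size sample of human marks (drawn without replacement).

    Assumption: this is one plausible reading of the comparison described
    in the article, not the authors' published analysis code.
    """
    rng = random.Random(seed)   # fixed seed so the estimate is repeatable
    ai_median = median(ai_marks)
    k = len(ai_marks)           # draw as many human marks as there are AI marks
    wins = 0
    for _ in range(n_draws):
        sample = rng.sample(human_marks, k)
        if ai_median > median(sample):
            wins += 1
    return wins / n_draws


if __name__ == "__main__":
    # Hypothetical marks (percentages), invented purely for illustration.
    ai = [68, 71, 66, 72, 70]
    humans = [55, 62, 58, 71, 49, 64, 60, 67, 53, 59,
              66, 61, 57, 74, 52, 63, 65, 56, 69, 60]
    print(f"Estimated chance AI median is higher: {prob_ai_outperforms(ai, humans):.3f}")
```

A value close to 1.0 from a calculation like this would correspond to the “nearly 100%” result reported for four of the five modules.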

Limitations

Despite these compelling findings, the researchers acknowledge several limitations to their study. The experiment was conducted at a single university within one discipline, potentially limiting its broader applicability. Only one AI model (GPT-4) was used, and given the rapid evolution of AI capabilities, results might vary with different systems. Additionally, the researchers didn’t attempt to mimic real-world cheating scenarios in which students modify AI-generated content before submitting it, a step that could make such work even harder to detect. Lastly, the study represents a snapshot in time, and both AI capabilities and detection methods are likely to advance quickly in the near future.

Discussion and Takeaways

In their discussion, the researchers emphasize several crucial takeaways. First and foremost, current detection methods are woefully inadequate – traditional plagiarism detection tools and human judgment simply aren’t up to the task of reliably identifying AI-generated content. This poses a significant threat to academic integrity, as the ease with which AI can produce high-quality, undetectable exam answers undermines the validity of current assessment methods. Consequently, universities may need to fundamentally reconsider how they evaluate student knowledge and skills in an AI-enabled world.

Rather than trying to eliminate AI use entirely, the researchers suggest that educators should explore ways to productively integrate AI into the learning process. They conclude by noting that as AI continues to advance, maintaining academic integrity will require constant vigilance and adaptation from educational institutions.

While AI undoubtedly poses significant challenges to current educational practices, it also presents exciting opportunities for innovation in teaching and learning. The key will be finding ways to harness AI’s potential while preserving the essential human elements of education.