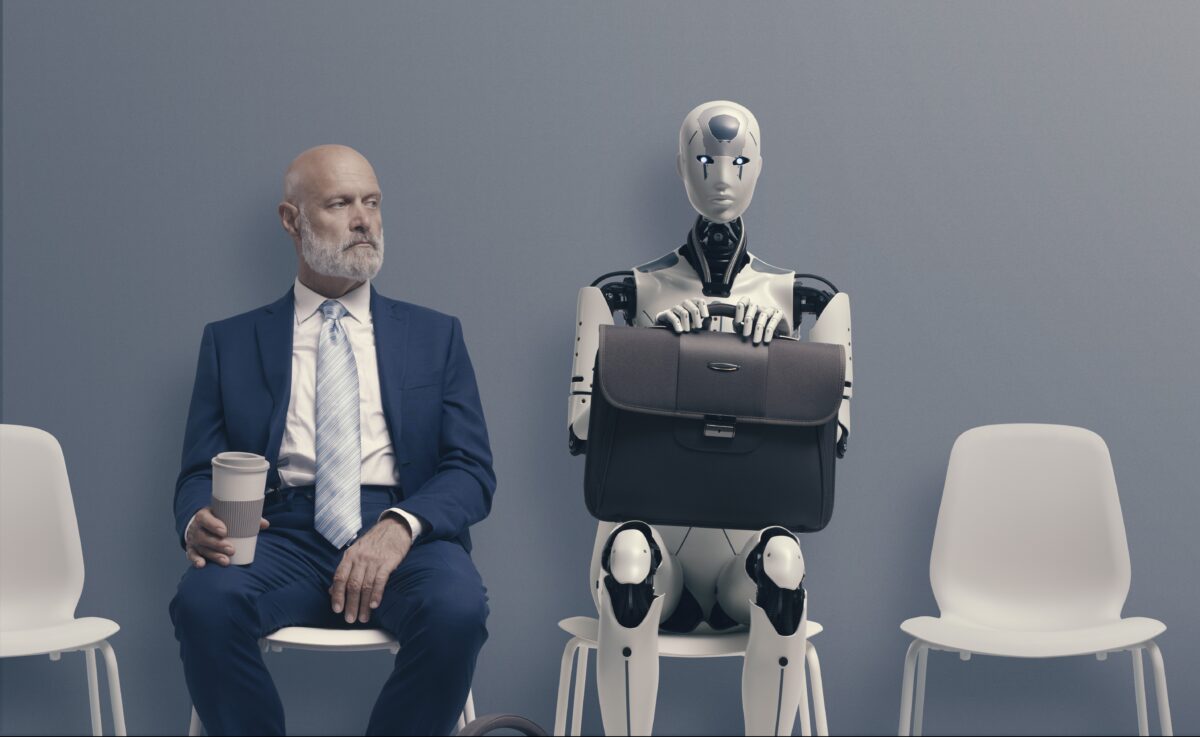

Are you sure you're actually talking to a real person on the other side of that video chat? (Photo by fizkes on Shutterstock)

In A Nutshell

- Call for stronger safeguards: Recruiters want platforms like LinkedIn to step in, new laws to require AI-use disclosures, and stricter live-interview and credential checks.

- AI fraud is flooding hiring: 72% of recruiters report encountering fake resumes, portfolios, or credentials created with AI.

- Deepfakes hit interviews: 15% of recruiters have seen face-swapping during video interviews, and 17% have detected voice filters or voice cloning.

- Tech, marketing, creative, and finance roles hardest hit: Recruiters say these fields face the greatest risk of AI-driven job fraud.

- Detection tools lag behind: Only 31% of companies use AI/deepfake detection software, despite most recruiters’ confidence in spotting fakes manually.

SPOKANE, Wash. — A survey of 874 hiring professionals reveals that artificial intelligence has invaded the job market in ways that would make even tech-savvy recruiters nervous. Nearly three-quarters of hiring managers say they’ve encountered AI-generated resumes, while 15% report seeing candidates use face-swapping technology during video interviews to manipulate their appearance in real time.

The research exposes a troubling reality: job fraud has gone high-tech, and most companies aren’t prepared to handle it.

Fake Everything: The Full Scope of AI Job Fraud

AI deception in hiring goes far beyond polished resumes. More than half of hiring professionals (51%) have spotted AI-created work portfolios, while 42% have identified fake references and 39% have found fake credentials or diplomas. Voice manipulation is also on the rise, with 17% of recruiters detecting voice filters or voice cloning attempts.

Despite these numbers, only 20% of hiring professionals say they lack confidence in detecting AI fraud without specialized software tools. That leaves a potential blind spot: most recruiters believe they can spot fraudulent applications manually.

Half of all recruiters now view AI-enhanced resumes as fraudulent, and nearly as many have rejected candidates based on suspected AI use. Another 40% have turned down applicants due to concerns about AI identity manipulation during interviews.

Tech Companies Are Prime Targets

The technology industry faces the highest risk, with 65% of hiring professionals identifying it as the most vulnerable sector for AI-driven job fraud. Marketing (49%) and creative/design roles (47%) follow, both fields where portfolios and visual work can be easily fabricated using AI tools. Finance rounds out the top four at 42%.

Other sectors are also seen as at risk, though to a lesser degree. Government was flagged as vulnerable by 21% of respondents, healthcare by 19%, and education by 15%.

Large companies with 250 or more employees face particular risk, according to nearly one-third of survey respondents. However, 35% believe organizations of all sizes are vulnerable to AI-driven applicant fraud.

The Detection Gap: Tools Don’t Match Confidence Levels

While hiring professionals express confidence in their detection abilities, only 31% of companies have actually implemented AI or deepfake detection software. Most organizations still rely on manual HR reviews (66%) and third-party background checks (53%) as their primary fraud prevention methods.

Other detection methods include biometric identity verification (27%), while a concerning 10% of companies use no detection tools at all.

The training gap makes the situation worse. Nearly half (48%) of HR professionals haven’t received any instruction on AI-driven hiring fraud, though 15% say their company has training plans in development.

Despite these shortcomings, change is coming. Almost 40% of companies plan to invest in detection tools within the next year, and more than half would pay extra for hiring platforms with built-in AI fraud detection.

Industry Pushes Back Against AI Fraud

Hiring professionals are demanding systemic changes to combat this growing threat. Nearly two-thirds (65%) believe job platforms like LinkedIn and Indeed should be responsible for flagging AI-generated applicants. Almost as many (62%) support federal laws requiring job seekers to disclose if they’ve used AI in their application materials.

Many recruiters are ready for stricter verification processes. Nearly 65% would support mandatory “live only” interviews to validate candidate identities, while 54% favor enhanced credential and background verification.

The urgency is clear: 88% of survey respondents predict that AI hiring fraud will reshape the hiring process within the next five years. Recruiters see AI-based resume manipulation as a bigger long-term threat than deepfake video technology, with 63% considering resume fraud the greater risk compared to 37% who view video deception as more concerning.

Survey Methodology: Software Finder conducted and commissioned this research, surveying 874 HR professionals and recruiters about AI and deepfake technology in hiring. Respondents averaged 42 years old, with 50% female, 49% male, and 1% nonbinary participants. The survey data was collected to explore how AI and deepfakes are impacting the job market and hiring practices.