
In A Nutshell

- MIT researchers built a portable ultrasound device weighing just over one pound that images more than 4 inches deep into breast tissue, matching hospital machine performance with far less power

- The box-shaped array uses only 128 elements instead of the thousands required by conventional systems, achieved through a clever geometry that creates a “virtual” 1,024-element array

- A chirped signal processing method enables deep imaging at 18 volts instead of the 90+ volts typical hospital systems require, dramatically reducing power consumption

- First human test successfully located breast cysts previously identified by clinical ultrasound, though larger trials are needed to validate detection of unknown abnormalities and distinguish tissue types

One day in the not-so-distant future, women may be able to monitor their breasts for changes as easily as taking their blood pressure: no travel to a clinic, no technician, no uncomfortable squishing. MIT researchers just built a portable ultrasound device that weighs about as much as a pineapple, runs on a fraction of the power that hospital machines use, and can see more than four inches deep into breast tissue. In its first human test, researchers used it to spot cysts in a patient’s breast that a hospital machine had also detected.

The breakthrough solves a problem that’s stumped engineers for years: how to make ultrasound work when nobody’s moving the probe around. Clinical ultrasound requires a trained technician constantly adjusting the device to find abnormalities.

For the 40% of women with dense breast tissue, where mammograms often fail to spot cancer, this type of technology could prove valuable. The device captures detailed images that would still need review by medical professionals, but the portability and low power requirements could eventually enable more frequent monitoring between clinical screenings. Researchers envision a future where women could track tissue changes over time, potentially catching abnormalities earlier when they’re most treatable.

Why Your Phone Can’t Already Do This

Your smartphone has way more computing power than the Apollo missions, yet medical ultrasound still requires cart-sized machines costing hundreds of thousands of dollars. The reason comes down to a brutal physics problem.

Traditional ultrasound shoots sound waves into tissue and listens for echoes, like a bat navigating in the dark. But it only “sees” a paper-thin slice of tissue at a time: thinner than three stacked credit cards. To build a 3D picture, you’d need thousands of tiny speakers and microphones working together. Commercial hospital systems use up to 65,000 individual elements, each requiring its own electronics. That means miles of wiring, circuit boards the size of textbooks, and enough power draw to drain a car battery in minutes.

Engineers have tried various shortcuts. Some designs use fewer elements but only see a narrow “pencil beam” straight ahead (even more restrictive than the thin slice problem). Others sacrifice image quality, producing grainy pictures full of artifacts and ghost images. Previous attempts at portable ultrasound for breast monitoring have run into the same wall: make it small enough to wear, and it can’t see deep enough or wide enough to be useful.

The team at MIT, led by biomedical engineer Canan Dagdeviren, attacked both problems at once. First, they redesigned the array itself. Second, they rewrote the rulebook on how ultrasound signal processing works.

The Box That Sees Everything

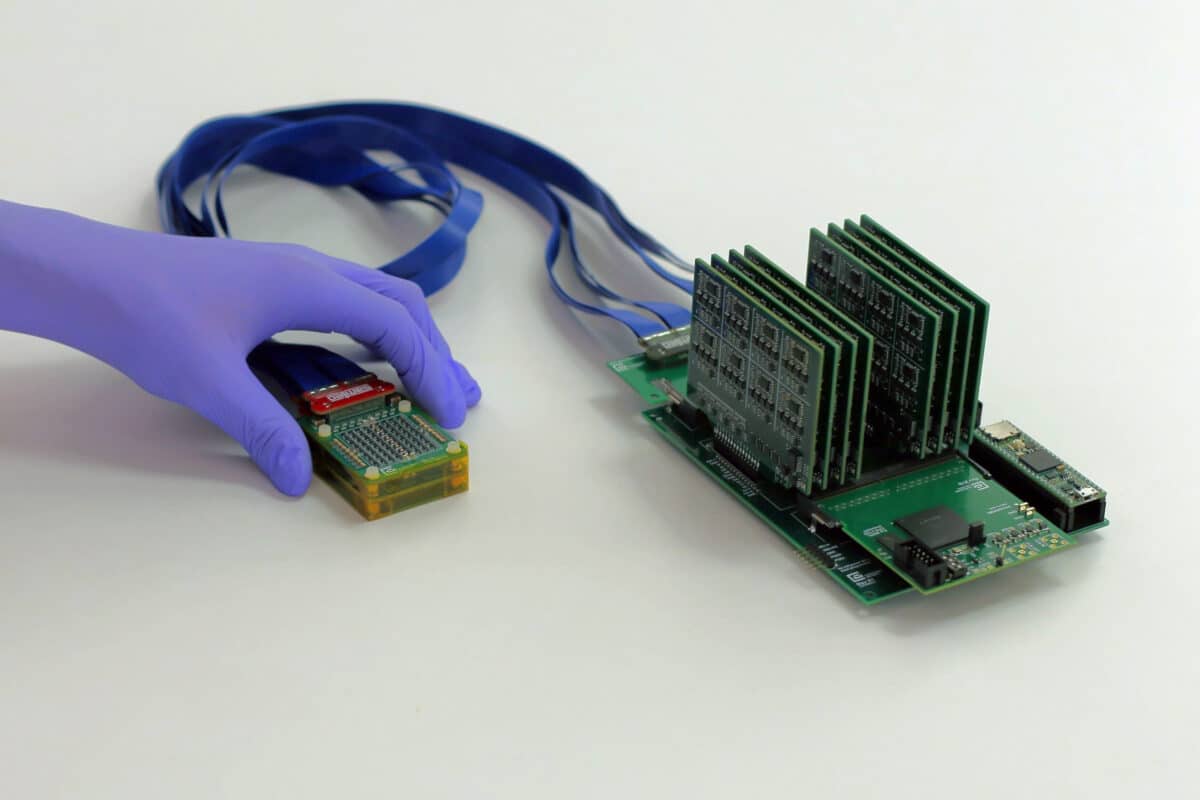

Instead of a flat panel packed with thousands of elements, Dagdeviren’s team built a box. Imagine a picture frame lying flat on your chest. The front and back sides have transmitters that send sound waves. The left and right sides have receivers that listen for echoes. This sounds simple, but the geometry creates something clever: even though the device only has 128 elements total (versus thousands in hospital machines), the way they interact creates a “virtual” array that performs like it has 1,024 elements.

Think of it like stereo vision. Your two eyes, positioned apart, let your brain construct 3D depth perception. The box array does something similar acoustically. The separated transmitters and receivers work together to build volumetric images from far fewer physical pieces.
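To see why separating transmitters from receivers can multiply the effective element count, here is a toy sketch of the general idea. It assumes the standard "sum co-array" picture from array processing, in which each transmit-receive pair behaves like one virtual element located at the sum of the two positions. The 4-by-4 layout, the integer coordinates, and the function name `virtual_positions` are illustrative assumptions only; they are not the device's actual geometry or the paper's method.

```python
from itertools import product

def virtual_positions(tx, rx):
    """Return the set of virtual element positions for a sum co-array.

    Each transmit-receive pair contributes one virtual element located at
    (tx position + rx position). Duplicates collapse because this is a set.
    """
    return {
        (tx_x + rx_x, tx_y + rx_y)
        for (tx_x, tx_y), (rx_x, rx_y) in product(tx, rx)
    }

# Hypothetical toy layout: 4 transmitters in a row, 4 receivers in a column.
n = 4
transmitters = [(i, 0) for i in range(n)]  # spread along x
receivers = [(0, j) for j in range(n)]     # spread along y

virtual = virtual_positions(transmitters, receivers)
physical_count = len(transmitters) + len(receivers)

print(f"physical elements: {physical_count}")
print(f"virtual elements:  {len(virtual)}")
```

In this toy case, 8 physical elements fill a 4-by-4 grid of 16 virtual positions. The physical count grows with the sum of the two sides while the virtual count grows with their product, which is the general reason a sparse transmit/receive split can punch above its weight.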

The device captures a cone of tissue 57 degrees wide, roughly the field of view of your eye. That’s broad enough that small positioning errors don’t matter. If the patch shifts a bit while you’re wearing it, abnormalities stay visible somewhere in the cone. The system successfully imaged targets more than 11 centimeters deep, over four inches into tissue.
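For a rough sense of scale, the numbers above can be turned into a back-of-the-envelope calculation. The sketch below assumes an idealized cone with its apex at the array surface (the real array has a physical footprint, so actual coverage would differ) and a textbook average speed of sound in soft tissue of 1,540 m/s. Neither assumption comes from the study itself.

```python
import math

CM_PER_INCH = 2.54
SPEED_OF_SOUND_M_S = 1540.0  # assumed textbook average for soft tissue

def cone_width_cm(depth_cm: float, full_angle_deg: float) -> float:
    """Width of an idealized cone (apex at the array) at a given depth."""
    half_angle_rad = math.radians(full_angle_deg / 2.0)
    return 2.0 * depth_cm * math.tan(half_angle_rad)

def round_trip_time_us(depth_cm: float) -> float:
    """Time for sound to reach a depth and echo back, in microseconds."""
    depth_m = depth_cm / 100.0
    return 2.0 * depth_m / SPEED_OF_SOUND_M_S * 1e6

depth_cm = 11.0
depth_in = depth_cm / CM_PER_INCH
width_cm = cone_width_cm(depth_cm, 57.0)
echo_us = round_trip_time_us(depth_cm)

print(f"depth: {depth_in:.2f} in")
print(f"cone width at that depth: {width_cm:.1f} cm")
print(f"round-trip echo time: {echo_us:.0f} microseconds")
```

Under these assumptions, 11 centimeters works out to about 4.3 inches, the cone is roughly 12 centimeters across at that depth, and an echo from the deepest point returns in well under a millisecond.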

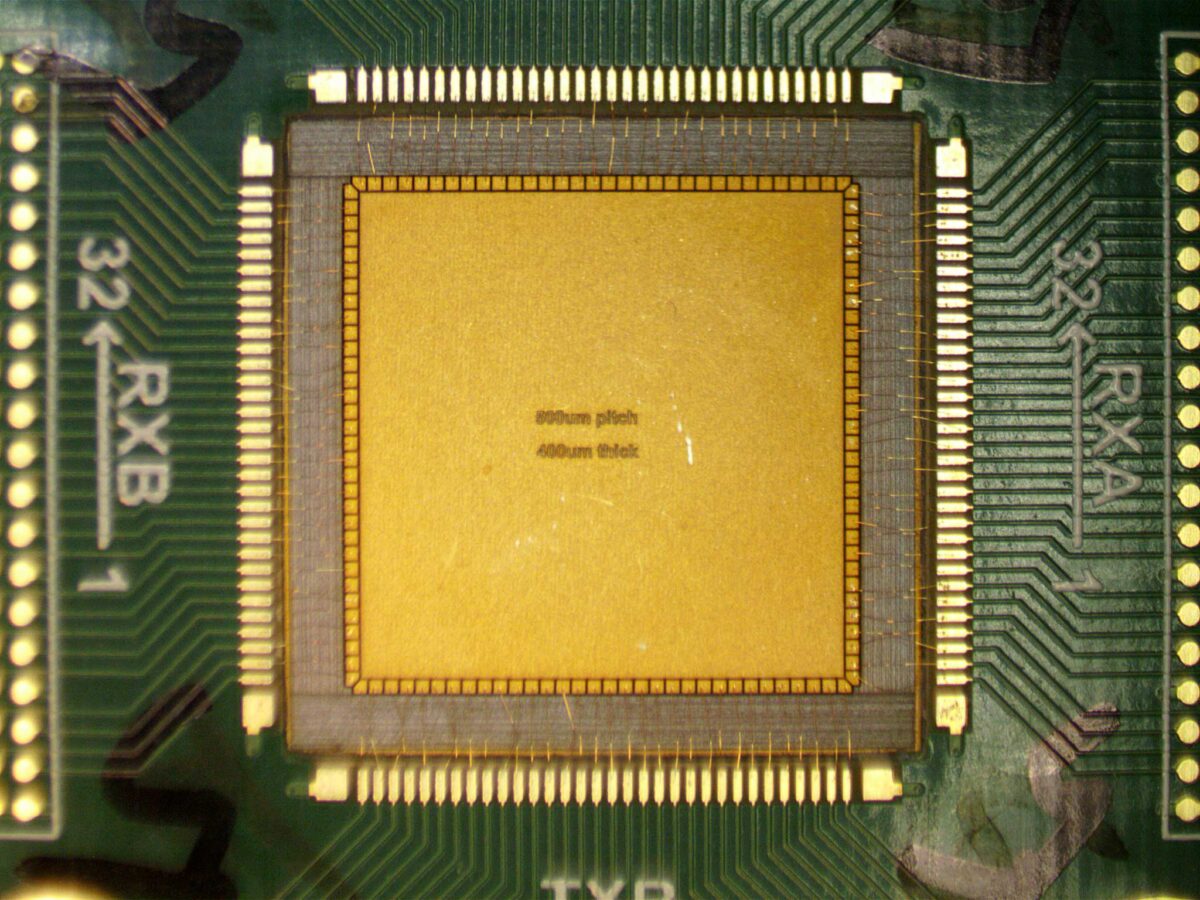

Building the array required shooting an ultrafast laser at piezoelectric ceramic (the material that converts electricity to sound waves) to carve out the unusual shapes. The entire cutting process takes less than ten minutes. Three layers of acoustic matching material coat the bottom surface, helping sound waves transition smoothly from the electronics into tissue.

The Whisper Problem

Sparse arrays with few elements inherently produce weak signals, akin to trying to hear a whisper in a noisy restaurant. The standard solution is to either shout louder (increase voltage) or ask the person to repeat themselves hundreds of times until you catch every word (averaging multiple measurements).

Neither option works for a portable device. High voltage is dangerous and drains batteries fast. Averaging hundreds of measurements means either slow image updates or massive power consumption from processing all that data.

The MIT team’s solution, published in Advanced Healthcare Materials, involves changing the conversation entirely. Instead of sharp “pings” of sound (like sonar), their system uses a chirp: a tone that sweeps from low to high frequency over several milliseconds. This packs more energy into tissue without increasing peak intensity, like the difference between tapping a drum once versus rolling on it.

The receive side does something equally unusual. Most ultrasound systems capture the raw high-frequency echoes directly, requiring electronics that sample millions of times per second. The new system instead shifts those high frequencies down to near-audio range before sampling, like pitch-shifting a soprano down to baritone. This lets them use simpler, lower-power electronics that sample 100 times slower.
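One common way to shift chirped echoes down in frequency, used in chirp-based radar and sonar, is to mix each echo with the outgoing sweep. The output is then a low "beat" tone whose frequency is proportional to the echo delay. Whether the MIT system works exactly this way is an assumption here, and the 1 MHz bandwidth and 5 millisecond sweep below are hypothetical values chosen only to illustrate the arithmetic.

```python
SPEED_OF_SOUND_M_S = 1540.0  # assumed textbook average for soft tissue

def beat_frequency_hz(depth_m, bandwidth_hz, sweep_s, c=SPEED_OF_SOUND_M_S):
    """Beat tone produced by mixing an echo with the transmitted linear chirp.

    The echo is a delayed copy of the sweep, so at any instant it differs
    from the outgoing sweep by (sweep slope) x (round-trip delay).
    """
    delay_s = 2.0 * depth_m / c
    slope_hz_per_s = bandwidth_hz / sweep_s
    return slope_hz_per_s * delay_s

def depth_from_beat_m(beat_hz, bandwidth_hz, sweep_s, c=SPEED_OF_SOUND_M_S):
    """Invert the relation above: recover depth from a measured beat tone."""
    delay_s = beat_hz * sweep_s / bandwidth_hz
    return delay_s * c / 2.0

# Hypothetical chirp parameters, not taken from the paper.
BANDWIDTH_HZ = 1.0e6
SWEEP_S = 5.0e-3

beat = beat_frequency_hz(0.11, BANDWIDTH_HZ, SWEEP_S)
recovered = depth_from_beat_m(beat, BANDWIDTH_HZ, SWEEP_S)

print(f"beat tone for an 11 cm target: {beat / 1000:.1f} kHz")
print(f"depth recovered from that tone: {recovered * 100:.1f} cm")
```

With these made-up parameters, a target 11 centimeters deep shows up as a tone of roughly 29 kHz, far below the megahertz frequencies of the raw echo. That gap is why a much slower and lower-power sampler could suffice in a scheme like this.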

To test the approach, the team compared their chirped signal processing method against a commercial Verasonics system using the same basic array design. The chirped method achieved similar imaging depth using just 5 volts of drive, while the pulsed commercial system required 92 volts to match that performance. The actual box array, built on what the paper calls the CODA principle, operates at 18 volts, chosen as the maximum voltage before signal interference between transmit and receive elements becomes problematic. This still represents dramatically lower power requirements than conventional systems while achieving imaging depths exceeding 11 centimeters.
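To put those voltages on a common scale, the short sketch below expresses each gap in decibels and as a ratio of peak electrical power. It assumes peak power scales with voltage squared into the same load. It deliberately ignores pulse length and duty cycle, so it says nothing about total energy use: a chirp lasts far longer than a ping.

```python
import math

def voltage_ratio_db(v_high: float, v_low: float) -> float:
    """Voltage ratio expressed in decibels (20 * log10 of the ratio)."""
    return 20.0 * math.log10(v_high / v_low)

def peak_power_ratio(v_high: float, v_low: float) -> float:
    """Peak power ratio, assuming power ~ V^2 into an identical load."""
    return (v_high / v_low) ** 2

# Voltages quoted in the article.
PULSED_V = 92.0   # commercial pulsed system
CHIRP_V = 5.0     # chirped method in the comparison test
DEVICE_V = 18.0   # operating voltage of the box array

for label, volts in (("5 V chirp test", CHIRP_V), ("18 V device", DEVICE_V)):
    db = voltage_ratio_db(PULSED_V, volts)
    ratio = peak_power_ratio(PULSED_V, volts)
    print(f"92 V vs {label}: {db:.1f} dB, about {ratio:.0f}x peak power")
```

Under that simplification, the 5-volt comparison corresponds to a gap of about 25 dB, and the 18-volt operating point to about 14 dB.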

What It Saw in a Real Patient

The team tested the system on a 71-year-old woman with known breast cysts, conducting the imaging session at MIT’s clinical research center. Researchers positioned the device and captured images showing fluid-filled cysts about an inch deep in her breast tissue, the same cysts a clinical ultrasound had previously identified.

Unexpectedly, the portable device’s images showed the cysts as deeper and more spherical than the hospital machine’s images. Why? The commercial handheld probe, with its small contact area, squishes breast tissue significantly when the technician presses it against the skin. That compression flattens cysts and moves them closer to the surface. The box array’s larger, flatter surface spreads pressure more evenly, causing less distortion.

This matters more than it might seem. If doctors are tracking suspicious tissue over months, they need consistent measurements. But if each scan compresses tissue differently (depending on who’s holding the probe and how hard they’re pushing), features might appear to change size when they’re actually unchanged. A device that causes minimal deformation could give more reliable tracking over time.

The system updates images in real time, showing 3D volumes four times per second or 2D slices up to 30 times per second. The complete setup, including the array, cable, and electronics, weighs 1.15 pounds. Right now it needs a laptop with a graphics card to process images, but the data rates are low enough that future versions could do everything on a smartphone chip.

What This Could Actually Mean

Dense breast tissue affects many women, and it’s especially common in younger women. Mammography struggles with dense breasts because both tumors and dense tissue appear white on X-rays. Ultrasound sees through dense tissue easily, but current systems require clinical visits with trained operators.

The technology could potentially support more frequent monitoring between regular clinical screenings. While the device captures detailed images, medical professionals would still need to interpret them and determine appropriate follow-up. The study doesn’t demonstrate the device’s ability to distinguish cancerous tumors from benign tissue or normal variations. That would require much larger clinical trials.

Still, the portability and low power requirements open interesting possibilities in resource-limited settings. Rural areas and developing countries often lack both mammography equipment and trained ultrasound technicians. A system requiring less operator skill could expand access to basic breast imaging where none currently exists.

There are still refinements needed. The system struggles within the first half-inch or so of tissue depth due to signal interference between adjacent elements. Some images show slight blurring compared to computer predictions, probably from subtle imperfections in how components were assembled. And human trials so far involve just one patient. The device successfully located pre-identified cysts, but hasn’t been tested for detecting previously unknown abnormalities or distinguishing different tissue types.

The fundamental breakthrough is real, though. The team proved you can do sophisticated 3D ultrasound imaging with minimal power, minimal size, and far fewer components than conventional systems. That opens the door to future devices that could bring imaging capabilities beyond hospital settings.

For Canan Dagdeviren, the work is personal. Her aunt died of breast cancer in 2015, inspiring her to focus on breast imaging technology. “Frequent monitoring could catch changes early,” she says, “when treatment options are most effective.”

The research was funded by the National Science Foundation, 3M, and the Lyda Hill Foundation. The team is exploring next steps toward eventual FDA approval and commercialization.

DISCLAIMER: This technology is currently in the research stage and has not been approved by the FDA or any regulatory body for home use or clinical diagnosis. The device has been tested on only one human subject with pre-identified breast cysts in a controlled research setting. Images captured by this device require interpretation by trained medical professionals. This technology does not diagnose cancer and should not replace mammography, clinical breast exams, or other screening methods recommended by healthcare providers. Readers should consult their physicians regarding appropriate breast health monitoring and screening protocols.

Paper Notes

Study Limitations

The system performs poorly within about 1 centimeter of the array surface due to signal interference between transmit and receive elements. Image quality in some experiments fell slightly short of computer simulations, likely due to subtle assembly variations and unequal electrical loading on array elements. Human testing involved only one patient with pre-identified cysts, imaged by researchers in a clinical setting. Larger trials across diverse breast tissue types and pathologies will be needed to establish diagnostic accuracy for detecting different abnormality types and distinguishing benign from malignant tissue. The device does not provide autonomous interpretation and would require clinical review of captured images. The current prototype requires an external laptop with a graphics processing unit for real-time image reconstruction. Near-field performance could improve with better shielding of exposed elements and optimized backing materials, though this introduces fabrication challenges with the gold nanowire interconnects. Alternative array geometries satisfying the CODA principle have not been systematically explored and might offer different performance trade-offs for specific applications. Longitudinal monitoring studies have not been performed to validate tumor tracking capabilities or growth rate detection.

Funding and Disclosures

This work was supported by NSF CAREER: Conformable Piezoelectrics for Soft Tissue Imaging (Grant Number 2044688), 3M Non-Tenured Faculty Award, The Lyda Hill Foundation, and MIT Media Lab Consortium funding. The authors declare no conflicts of interest. Human subject research followed protocols approved by the Committee on the Use of Humans as Experimental Subjects at MIT (COUHES, Protocol Number: 2011000271A007) with informed consent. The clinical study was conducted at the MIT Center for Clinical and Translational Research with assistance from clinical research nurse coordinators.

Publication Details

Marcus, Colin, Md Osman Goni Nayeem, Aastha Shah, Jason Hou, Shrihari Viswanath, Maya Eusebio, David Sadat, Anantha P. Chandrakasan, Tolga Ozmen, and Canan Dagdeviren. “Real-Time 3D Ultrasound Imaging with an Ultra-Sparse, Low Power Architecture.” Advanced Healthcare Materials (2026). DOI: 10.1002/adhm.202505310. Received October 24, 2025; Revised January 13, 2026; Accepted January 22, 2026. Published online January 22, 2026. Open access article under Creative Commons Attribution License. Research conducted at Media Lab and Department of Electrical Engineering and Computer Science, Massachusetts Institute of Technology, Cambridge, Massachusetts, and Division of Surgical Oncology, Massachusetts General Hospital, Harvard Medical School, Boston, Massachusetts. Correspondence: Canan Dagdeviren (canand@media.mit.edu). Colin Marcus and Md Osman Goni Nayeem contributed equally to this work.