Twelve toys were examined in a study on smart toys and privacy. (Credit: University of Basel / Céline Emch)

BASEL, Switzerland — Remember when toys were simple? A stuffed animal was just a stuffed animal, and the only data it collected was the occasional ketchup stain or grass mark from outdoor adventures. But in today’s digital age, your child’s favorite playmate might be secretly moonlighting as a miniature surveillance device, collecting data on everything from playtime habits to personal preferences.

Welcome to the brave new world of smart toys, where every playtime could be a potential privacy pitfall. An eye-opening new study by researchers from the University of Basel uncovers alarming shortcomings in the privacy and security features of popular smart toys, raising concerns about the safety of children’s personal information.

The study, published in the journal Privacy Technologies and Policy, examined 12 smart toys available in the European market. These included toys equipped with internet connectivity, microphones, cameras, and the ability to collect and transmit data. They include household names like the Toniebox, tiptoi smart pen and its optional charging station, and the ever-popular Tamagotchi. Think of them as miniature computers disguised as playful companions.

At first glance, these high-tech toys seem like a parent’s dream. Take the Toniebox, for instance. This clever device allows even the youngest children to play their favorite stories and songs with ease – simply place a figurine on the box, and voilà! The tale begins. Tilt the box left or right to rewind or fast-forward. It’s so simple that even a toddler can master it.

Here’s where the plot thickens: while your little one is lost in the world of Peppa Pig, the Toniebox is busy creating a digital dossier. According to the study, it meticulously records when it’s activated, which figurine is used, and when playback stops, and even tracks those rewinds and fast-forwards. All this data is then whisked away to the manufacturer, painting a detailed picture of your child’s play patterns.

The Toniebox isn’t alone in its data-gathering ways. The study found that many smart toys are collecting extensive behavioral data about children, often without clear explanations of how this information will be used or protected. It’s like having a constant surveillance system watching your child’s every move during playtime.

“Children’s privacy requires special protection,” emphasizes Julika Feldbusch, first author of the study, in a statement.

She argues that, given their young target audience, toy manufacturers should place greater weight on the privacy and security of their products than they currently do. The study also found that most toys lack transparency when it comes to data collection and processing. Privacy policies, when they exist at all, are often vague, difficult to understand, or buried in fine print. This means parents are often in the dark about what information is being collected from their children and how it’s being used.

Security measures also needed improvement. While most toys use encryption for data transmitted over the Internet, local network connections – like those used during initial setup – were often unencrypted. Some popular toys, including the Toniebox and tiptoi’s optional charging station, were found to have inadequate encryption for data traffic, potentially leaving children’s information vulnerable to interception. The actual tiptoi pen does not record how and when a child uses it. Only audio data for the purchased products is transferred.

In some cases, researchers were able to intercept Wi-Fi passwords and other sensitive information simply by eavesdropping on these local connections.

Perhaps most alarmingly, when the researchers attempted to exercise their rights under Europe’s General Data Protection Regulation (GDPR) by requesting access to the data collected about them, only 43% of toy vendors responded within the legally mandated one-month period. Even then, some of the responses were incomplete or unsatisfactory.

Moreover, many companion apps for these toys were found to request unnecessary and invasive permissions.

“The apps bundled with some of these toys demand entirely unnecessary access rights, such as to a smartphone’s location or microphone,” says Professor Isabel Wagner of the Department of Mathematics and Computer Science at the University of Basel.

In the wrong hands, the data collected by these toys could be used for identity theft, targeted advertising, or even more sinister purposes like child grooming or blackmail. And because children are particularly vulnerable and may not understand the implications of sharing personal information, the onus is on toy manufacturers and parents to ensure their safety in the digital playground.

“We’re already seeing signs of a two-tier society when it comes to privacy protection for children,” says Feldbusch. “Well-informed parents engage with the issue and can choose toys that do not create behavioral profiles of their children. But many lack the technical knowledge or don’t have time to think about this stuff in detail.”

So, what can parents do to protect their tech-savvy tots? The researchers suggest looking for toys that prioritize privacy and security, reading privacy policies carefully (even if they’re boring), and being cautious about granting unnecessary permissions to toy apps. They also recommend that toy makers implement stronger security measures and provide more transparent information about data collection practices.

Prof. Wagner acknowledges that individual children might not experience immediate negative consequences from these data collection practices.

“But nobody really knows that for sure,” she cautions. “For example, constant surveillance can have negative effects on personal development.”

The study serves as a wake-up call not just for parents, but for regulators and toy manufacturers as well. As smart toys become increasingly prevalent, it’s crucial that we find a balance between innovation and protecting our children’s privacy and security.

The researchers hope their work will lead to improved standards and practices in the smart toy industry. In the meantime, parents might want to think carefully about whether that internet-connected teddy bear is really worth the potential risks to their child’s privacy and safety.

Editor’s Note: The University of Basel informed StudyFinds that a portion of its press release did not include important findings regarding the tiptoi pen and its optional charging station. “Not only the tiptoi pen but also its optional charging station was tested in the study. The insufficient encryption of data traffic concerns the optional charging station of the pen,” a university official said in an email to StudyFinds. This has been noted and updated in our story.

Ravensburger, the manufacturer of tiptoi, also contacted StudyFinds regarding the omission and issued the following statement:

“The University of Basel published a press release about a study according to which smart toys – including the tiptoi pen – enable unsafe data traffic in the children’s room and sometimes also collect data about children’s behaviour. These statements are false in the case of tiptoi. What is true, however, is that neither the tiptoi pen nor its charging station collect data or create user profiles.

“In the study by the University of Basel, it was not the pen but the optional charging station that was evaluated. This can only be used with the fourth generation of tiptoi pens. The vast majority of customers download their audio files via PC, so the study does not affect most tiptoi owners.

“One point of criticism in the study is that some smart toys transfer data unencrypted. However, tiptoi does not transfer any private data, only the audio files and updates for tiptoi products that are publicly available.

“The study also criticised the process of setting up the charging station’s internet connection. During this process, which takes a few minutes, the access data is sent unencrypted to the charging station – as is the case with the setup of many other electronic devices. Someone within a radius of 25 metres could theoretically access the access data at this moment. Although the study estimates the risk to be extremely low, Ravensburger is working on offering an alternative transmission method.”

Paper Summary

Methodology

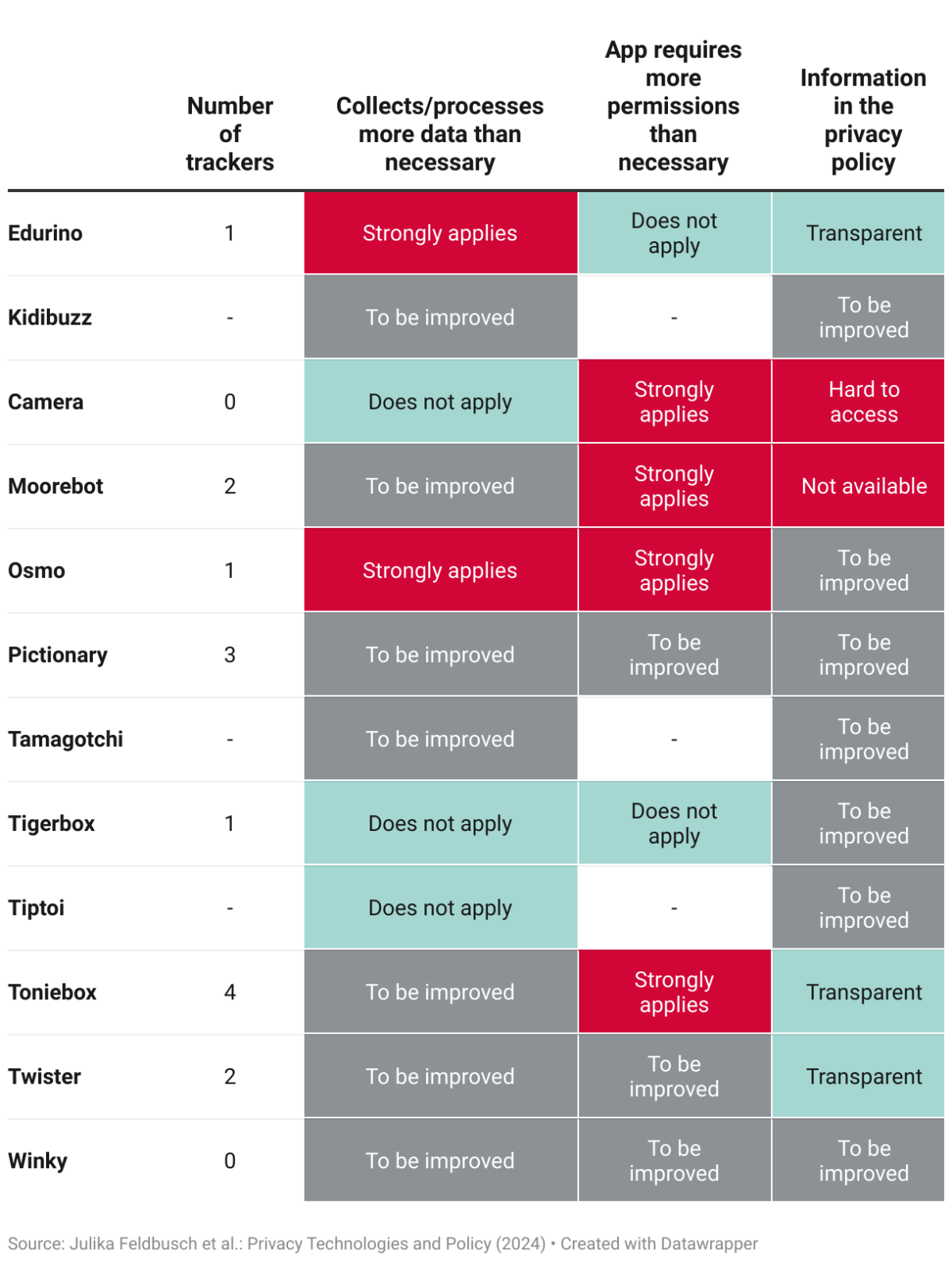

The researchers employed a comprehensive approach to evaluate 12 diverse smart toys available on Amazon.de, focusing on those with Wi-Fi capabilities. They developed evaluation criteria based on established cybersecurity standards for consumer IoT devices. Their assessment methods included decrypting and analyzing network traffic between the toys and their servers, examining the security of Wi-Fi setup processes, and performing static analysis of companion apps to identify requested permissions and embedded trackers.

The team also reviewed privacy policies and terms of service and sent subject access requests to toy vendors to test compliance with data protection regulations. This multi-faceted approach provided a thorough understanding of each toy’s security, privacy, and transparency features.
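To give a rough sense of what "static analysis of companion apps to identify requested permissions" can look like in practice, here is a minimal Python sketch. It is not the researchers' tooling, which this summary does not describe. It assumes the app's Android manifest has already been decoded to plain XML, and both the example manifest and the short watch list of sensitive permissions are made up for illustration.

```python
import xml.etree.ElementTree as ET

# Namespace Android uses for attributes such as android:name.
ANDROID_NS = "http://schemas.android.com/apk/res/android"

# Illustrative watch list (an assumption, not the study's criteria).
SENSITIVE = {
    "android.permission.RECORD_AUDIO",
    "android.permission.ACCESS_FINE_LOCATION",
    "android.permission.ACCESS_COARSE_LOCATION",
    "android.permission.CAMERA",
}


def requested_permissions(manifest_xml: str) -> list[str]:
    """Return every permission named in <uses-permission> elements."""
    root = ET.fromstring(manifest_xml)
    name_attr = f"{{{ANDROID_NS}}}name"
    return [
        el.get(name_attr)
        for el in root.iter("uses-permission")
        if el.get(name_attr)
    ]


def flag_sensitive(permissions: list[str]) -> list[str]:
    """Keep only the permissions that appear on the watch list."""
    return sorted(p for p in permissions if p in SENSITIVE)


# Hypothetical manifest for a made-up toy app.
EXAMPLE_MANIFEST = """<manifest
    xmlns:android="http://schemas.android.com/apk/res/android"
    package="com.example.toyapp">
    <uses-permission android:name="android.permission.INTERNET" />
    <uses-permission android:name="android.permission.RECORD_AUDIO" />
    <uses-permission android:name="android.permission.ACCESS_FINE_LOCATION" />
</manifest>"""

if __name__ == "__main__":
    perms = requested_permissions(EXAMPLE_MANIFEST)
    for perm in flag_sensitive(perms):
        print("sensitive permission requested:", perm)
```

A check like this only shows what an app asks for, not whether it actually uses the access, which is why the researchers paired it with network traffic analysis.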

Key Results

The study revealed significant concerns across multiple areas. In terms of security, while most toys used encryption for internet communications, local network connections were often unprotected. Some toys had vulnerabilities in their Wi-Fi setup processes that could allow attackers to intercept sensitive information. Privacy issues were prevalent, with many toys collecting extensive analytics data and unique identifiers, enabling detailed behavioral profiling of children.

Companion apps often requested unnecessary and sensitive permissions. Transparency was lacking, with privacy policies frequently vague or difficult to access. Only 43% of vendors responded adequately to subject access requests within the required timeframe. Most toys fell short of full compliance with the GDPR and the upcoming Cyber Resilience Act, particularly in areas of data minimization and user rights.

Study Limitations

The study has several notable limitations. The sample size of 12 toys may not be fully representative of the entire smart toy market. The research was conducted at a single point in time, preventing the tracking of changes in data collection practices over time. The team did not attempt to extract or reverse engineer device firmware, which could have revealed additional insights.

Additionally, the study’s focus on toys available in the European market means the findings may not be fully applicable to other regions with different regulations and practices.

Discussion & Takeaways

The researchers emphasize the critical need for toy makers to prioritize privacy and security, adhering to best practices in security and privacy engineering. They advocate for easier subject access request processes and more granular consent options for users. The study suggests implementing standardized privacy and security labels for toy packaging, similar to nutrition labels on food products.

For parents, the key takeaway is the need for increased vigilance when purchasing and using smart toys, including carefully reading privacy policies and being cautious about granting app permissions. The research community and regulators are called upon to provide more support for toy makers, potentially through the development of standardized guidelines or certification processes for smart toys.

Funding and Disclosures

The paper does not mention specific funding sources, and the authors declare no competing interests relevant to the study’s content. The research was conducted by academic researchers at the University of Basel, suggesting it was likely funded through standard university research channels rather than by industry or specific interest groups.