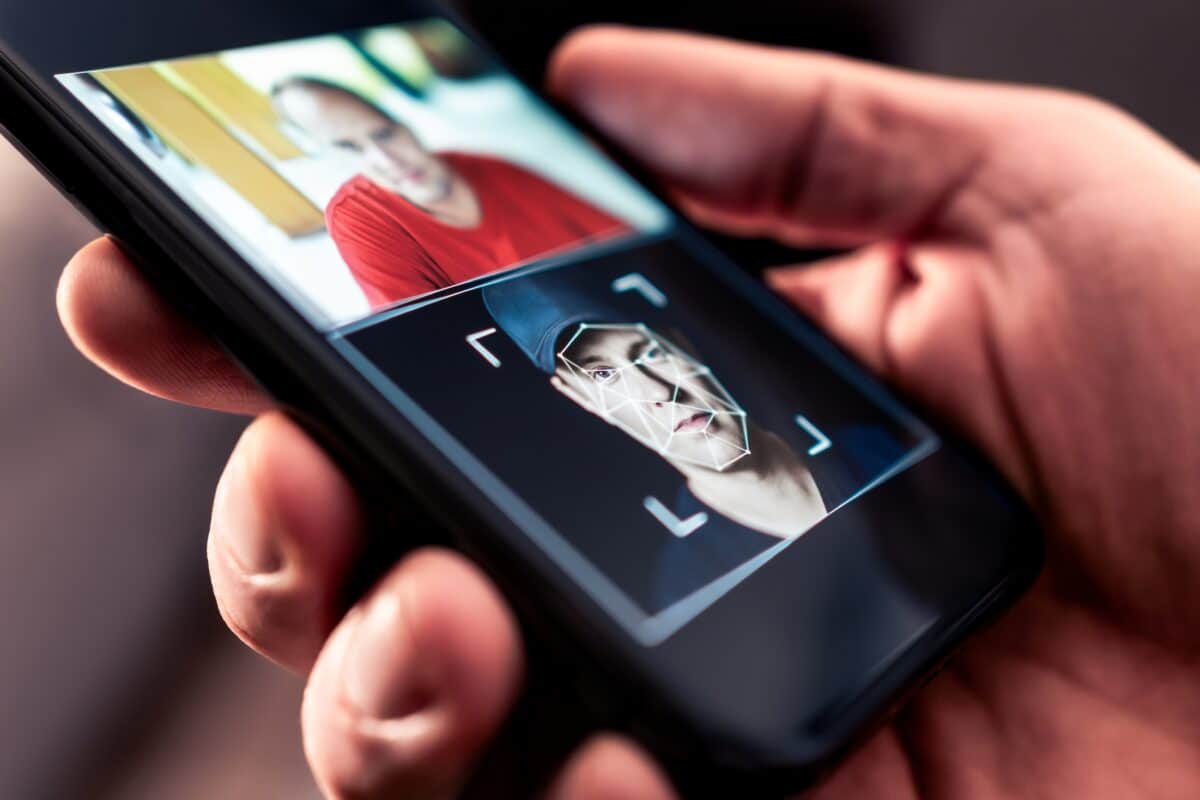

AI-generated faces are becoming more and more common. (Credit: Tero Vesalainen on Shutterstock)

Learning how to detect AI-generated faces is five minutes well spent in today’s increasingly synthetic world.

In A Nutshell

- Most people perform worse than random guessing when trying to identify AI-generated faces, often perceiving fake faces as more realistic than real ones

- People with exceptional face recognition skills (super-recognizers) naturally perform better at detecting synthetic faces than typical observers

- Just five minutes of training showing common AI rendering flaws—like oddly rendered hair or incorrect tooth counts—significantly improved detection accuracy for both super-recognizers and typical participants

- The training effects appeared across most synthetic faces tested, not just obvious fakes, suggesting practical real-world applications for social media moderation and identity verification

Spotting a fake face created by artificial intelligence might sound like a job for advanced algorithms, but research suggests humans can learn to do it surprisingly well with just five minutes of training.

A study from the University of Reading and colleagues found that a brief training session (about five minutes of instruction and practice with feedback) showing people common flaws in AI-generated faces boosted detection rates significantly. Additionally, the research revealed that most people without training perform worse than random guessing when trying to identify synthetic faces, often perceiving them as more realistic than actual human photographs.

The findings offer a practical solution to a growing security threat. AI-generated faces have already been used to create fake social media accounts for harassment campaigns, and as the technology improves, the potential for fraud and misinformation grows.

“Synthetic faces are now extremely realistic, to the extent that human observers are highly error-prone at discriminating them from real faces,” the researchers wrote in Royal Society Open Science.

Testing People with Exceptional Face Recognition Skills

The study tested 664 participants across multiple experiments, including super-recognizers, people with exceptional face recognition abilities. Some police forces already employ these individuals to identify suspects from security footage.

Participants viewed faces created by StyleGAN3, the most advanced face-generation system available at the time of data collection. The synthetic images were paired with real photographs from Flickr, with both sets including diverse demographics to reflect realistic conditions.

Without training, typical participants performed at just 42% accuracy when asked to identify which of two faces was AI-generated—significantly worse than the 50% expected from random guessing. Super-recognizers fared better at 54%, but still showed room for improvement.

What the Training Involved: Practice with Feedback

The training intervention was remarkably simple. Researchers showed participants examples of rendering artifacts—subtle flaws that AI systems sometimes leave in generated images. These included oddly rendered hair, unusual numbers of teeth, or strange transitions where facial features meet the background. Participants then completed ten practice trials and received immediate feedback on whether their answers were correct.

After this brief session, super-recognizers jumped to 64% accuracy, performing significantly better than chance. Typical participants also improved substantially, reaching chance-level performance and eliminating the tendency to perceive fake faces as more real than genuine ones.

The training effects appeared across most images in the study rather than benefiting only a few obvious fakes. Around 55 of the 80 synthetic faces showed accuracy improvements of more than 10% after training for super-recognizers.

Why Some People Are Better at Spotting Fakes

Interestingly, super-recognizers took longer to make their decisions than one of the control groups, but this didn’t explain their performance advantage. Response time didn’t correlate with accuracy within groups, suggesting the super-recognizers’ edge comes from superior perceptual abilities rather than simply being more careful.

Because training improved both groups to a similar degree, the research team suggested that the super-recognizers’ advantage does not come solely from spotting rendering artifacts. They likely draw on other face-processing skills to detect synthetic faces, and targeted instruction adds to those abilities.

The study used signal detection theory to analyze whether improved performance came from better discrimination ability or simply changed decision-making strategies. The training improved actual sensitivity rather than just making people more suspicious of all faces.
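
To make the distinction between sensitivity and decision strategy concrete, here is a minimal sketch of the standard signal detection measures, d′ (sensitivity) and c (criterion). It is an illustration under stated assumptions, not the researchers’ analysis code: the function name `sdt_measures`, the coding of hits and false alarms, the log-linear correction, and all counts are invented for the example.

```python
from statistics import NormalDist


def sdt_measures(hits, misses, false_alarms, correct_rejections):
    """Return (d_prime, criterion) for a yes/no "is this face synthetic?" task.

    Assumed coding (not taken from the paper): a hit is a synthetic face
    judged "not real"; a false alarm is a real face judged "not real".
    """
    # Log-linear correction keeps both rates strictly between 0 and 1,
    # so the inverse normal CDF below is always defined.
    hit_rate = (hits + 0.5) / (hits + misses + 1)
    fa_rate = (false_alarms + 0.5) / (false_alarms + correct_rejections + 1)

    z = NormalDist().inv_cdf
    d_prime = z(hit_rate) - z(fa_rate)             # sensitivity
    criterion = -0.5 * (z(hit_rate) + z(fa_rate))  # response bias
    return d_prime, criterion


# Hypothetical counts for one observer over 80 synthetic and 80 real faces.
suspicious_only = sdt_measures(60, 20, 60, 20)  # calls most faces "not real"
more_sensitive = sdt_measures(60, 20, 20, 60)   # tells the two kinds apart

print("bias shift only:", suspicious_only)
print("sensitivity gain:", more_sensitive)
```

In the first hypothetical observer, the hit rate and false-alarm rate rise together, so d′ stays at zero and only the criterion moves. In the second, the hit rate rises while false alarms fall, which is the pattern a genuine gain in sensitivity would produce.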

Practical Uses for Detecting Fake Faces Online

The practical applications extend beyond security and law enforcement. As AI-generated faces become more prevalent, brief training programs could help social media moderators, journalists verifying sources, or anyone needing to authenticate online identities.

The researchers acknowledged that as AI systems improve beyond StyleGAN3 (which was state-of-the-art when they collected their data), obvious rendering artifacts may become rarer, potentially reducing the effectiveness of artifact-focused training. However, combining brief training with the natural abilities of super-recognizers could provide a strong human defense against increasingly sophisticated synthetic faces.

The next step is determining whether the training effects last over time and whether they can be combined with other interventions, such as having multiple trained observers review the same images. The researchers also suggested that trained super-recognizers could work alongside AI detection algorithms for optimal results.

For now, the message is clear: humans aren’t helpless against AI-generated faces. With just a few minutes of instruction on what to look for, people can significantly improve their ability to spot fakes in an increasingly synthetic digital world.

Disclaimer: This article summarizes research published in a peer-reviewed scientific journal. It is intended for general information purposes and should not be considered professional advice for security, law enforcement, or identity verification applications. For specific concerns about AI-generated faces or identity verification, consult appropriate professionals in cybersecurity or digital forensics.

Paper Summary

Methodology

The researchers conducted two main experiments with both trained and untrained versions. In Experiment 1, participants viewed individual faces and indicated whether each was “real” or “not real” (AI-generated). In Experiment 2, participants viewed two faces simultaneously—one real and one synthetic—and selected which was AI-generated. The study recruited 664 total participants across all conditions, including super-recognizers (people who score at least 2 standard deviations above average on three face recognition tests), database controls from previous face recognition studies, and general population controls from Prolific. Stimuli consisted of 80 real faces from the Flickr-Faces-HQ Dataset and 80 synthetic faces generated using StyleGAN3, balanced for apparent gender and ethnicity. For training conditions, participants first viewed six synthetic faces with obvious rendering artifacts (such as poorly rendered hair or incorrect tooth counts), then completed 10 practice trials with immediate feedback before proceeding to the main task. All experiments were conducted online using Gorilla Experiment Builder.
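
The super-recognizer threshold described above can be sketched as a z-score check. This is a hedged illustration only: it assumes the cutoff applies to each of the three tests separately, and the test names, norms, and scores are placeholders invented for the example rather than values from the study.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Norm:
    """Population mean and standard deviation for one face recognition test."""
    mean: float
    sd: float


def z_score(score, norm):
    """Distance of a score from the population mean, in standard deviations."""
    return (score - norm.mean) / norm.sd


def is_super_recognizer(scores, norms, threshold=2.0):
    """True if the score on every normed test is at least `threshold` SDs above the mean."""
    return all(z_score(scores[name], norms[name]) >= threshold for name in norms)


# Placeholder test names and norms, invented for illustration only.
norms = {
    "test_a": Norm(mean=70.0, sd=10.0),
    "test_b": Norm(mean=50.0, sd=8.0),
    "test_c": Norm(mean=80.0, sd=5.0),
}

candidate = {"test_a": 93.0, "test_b": 67.0, "test_c": 91.0}
print(is_super_recognizer(candidate, norms))
```

Requiring the cutoff on every test, rather than on an average, means one strong score cannot compensate for an ordinary one.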

Results

Without training, super-recognizers achieved 54% accuracy in the two-alternative forced choice task, while both control groups performed at 42%—significantly below the 50% chance level. In the single-face detection task, super-recognizers performed at chance (41% correctly identifying synthetic faces), while controls performed significantly below chance (around 30%), demonstrating AI hyperrealism. After training, super-recognizers improved to 64% accuracy and controls reached chance-level performance. Using signal detection theory analysis, researchers found that training improved actual sensitivity rather than simply changing decision criteria. Super-recognizers showed significantly better sensitivity than controls in all conditions. Response times were slower for super-recognizers than for one control group but didn’t correlate with accuracy within groups. The training effect appeared across the majority of images rather than benefiting only obvious cases—55 of 80 synthetic faces showed more than 10% accuracy improvement for super-recognizers after training.

Limitations

The study used StyleGAN3, which was state-of-the-art at data collection but will be superseded by newer systems. As generative AI improves, rendering artifacts may become less obvious, potentially reducing the effectiveness of artifact-focused training. The study didn’t assess whether training effects persist over time or generalize to faces generated by different AI systems. Sample sizes for some subgroups (particularly database controls in Experiment 2) fell slightly below the target of 60 participants. The super-recognizer screening procedures, while rigorous, included some tests that are no longer considered optimal (such as the Glasgow Face Matching Test). Participants completed the study online, which allowed less experimental control than laboratory settings, though previous research has validated online cognitive testing. The study included faces of different ethnicities but wasn’t designed to systematically investigate demographic effects on detection accuracy.

Funding and Disclosures

Katie L.H. Gray was supported by an award from the Leverhulme Trust (RPG-2024-245). The authors declared no competing interests. All participants gave informed consent, and ethical clearance was granted by the local ethics committee (project code: 2024-016-KG). The super-recognizers and database controls were unpaid volunteers from the Greenwich Face and Voice Recognition Laboratory database, while Prolific controls were compensated £3 or £3.50 depending on the task version.

Publication Details

Gray, K.L.H., Davis, J.P., Bunce, C., Noyes, E., & Ritchie, K.L. (2025). “Training human super-recognizers’ detection and discrimination of AI-generated faces,” published November 12, 2025 in Royal Society Open Science, 12, 250921. DOI:10.1098/rsos.250921