Researchers have developed a wearable, comfortable and washable device called Revoice that could help people regain the ability to communicate naturally and fluently following a stroke, without the need for invasive brain implants. (Credit: University of Cambridge)

In A Nutshell

- A wearable “intelligent throat” device allows stroke patients with dysarthria to communicate by silently mouthing words—no sound required

- The smart choker achieved 96% word accuracy and 97% sentence accuracy in tests with five patients using a 47-word vocabulary

- AI detects emotional cues from pulse patterns and can optionally expand short phrases like “We go hospital” into complete, natural sentences

- Unlike brain implants, this comfortable fabric sensor runs on a day-long battery, with just a one-second delay from silent articulation to synthesized speech

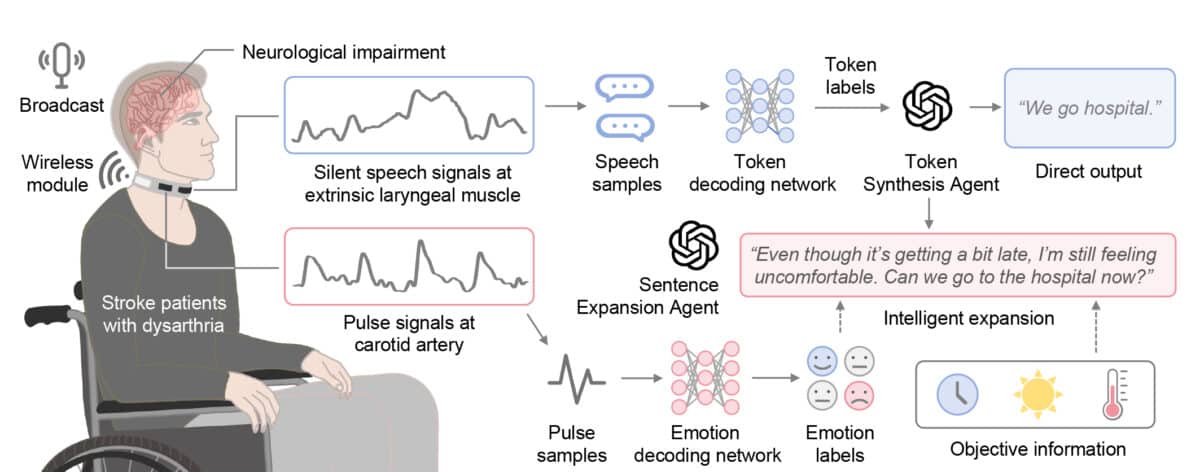

Scientists have developed a smart choker that translates silent speech into natural-sounding sentences for stroke patients who’ve lost the ability to speak clearly. The device decodes words, picks up emotional cues from pulse patterns, and can expand fragmentary thoughts into complete expressions, if that’s what the user wants.

The wearable system, called the Intelligent Throat (IT), could transform life for people with dysarthria, a motor-speech disorder that frequently results from stroke and compromises neuromuscular control over the vocal tract. Stroke patients often struggle to form words even when they know exactly what they want to say, leading to isolation, frustration, and depression.

Researchers from the University of Cambridge and Beihang University tested the device on five stroke patients using a 47-word vocabulary of common phrases. In this focused test, the system correctly decoded 95.8% of words and 97.1% of complete sentences while tagging speech with emotional context to make communication feel more natural. Users reported 55% higher satisfaction compared to basic word-by-word output.

“This work establishes a portable, intuitive communication platform for patients with dysarthria with the potential to be applied broadly across different neurological conditions and in multi-language support systems,” the researchers write in Nature Communications.

How Revoice Detects Silent Speech

The device works by sensing subtle vibrations in the throat muscles and pulse at the carotid artery. Users silently mouth words without making sounds, and sensors printed on an elastic fabric band capture the tiny movements. A small wireless circuit transmits the data to artificial intelligence that interprets both speech and emotional cues.

Previous silent speech devices forced users to pause between words. The IT analyzes speech continuously in rapid 100-millisecond segments, creating a fluid experience closer to natural conversation. The fabric sensors are exceptionally sensitive, responding to deformations as small as 0.1%, yet comfortable enough to wear all day on a battery that lasts from morning to night.
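To make the idea of continuous 100-millisecond analysis concrete, here is a minimal sketch of how a stream of sensor readings might be cut into fixed-length segments. It is an illustration only, not the researchers' implementation: the function name `split_into_segments`, the 1,000 Hz sampling rate in the usage note, and the choice of non-overlapping segments are all assumptions, since the article does not give those details.

```python
from typing import List, Sequence


def split_into_segments(
    samples: Sequence[float],
    sample_rate_hz: int,
    segment_ms: int = 100,
) -> List[List[float]]:
    """Split a continuous signal into consecutive fixed-length segments.

    Hypothetical helper: the sampling rate is supplied by the caller, and
    segments are assumed not to overlap. A trailing partial segment is
    dropped so that every returned segment has the same length.
    """
    if sample_rate_hz <= 0 or segment_ms <= 0:
        raise ValueError("sample_rate_hz and segment_ms must be positive")

    # Number of samples that fit in one segment of segment_ms milliseconds.
    segment_len = sample_rate_hz * segment_ms // 1000
    if segment_len == 0:
        raise ValueError("sampling rate too low for this segment length")

    n_full = len(samples) // segment_len
    return [
        list(samples[i * segment_len:(i + 1) * segment_len])
        for i in range(n_full)
    ]
```

With an assumed 1,000 Hz sampling rate, 250 readings would yield two full segments of 100 samples each, and the remaining 50 readings would wait for the next batch. Each segment could then be handed to a decoder as soon as it is complete, which is what lets the user keep mouthing words without pausing.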

AI Technology Completes Thoughts and Adds Emotion

Beyond decoding words, the system uses a large language model (an AI text tool similar to those behind chatbots) to correct errors and, when the user chooses, expand short phrases into complete sentences. When a patient silently mouths a brief fragment like “We go hospital” (which takes far less effort than a full sentence), they can opt to have the system transform it into something fuller: “Even though it’s getting a bit late, I’m still feeling uncomfortable. Can we go to the hospital now?”

This optional expansion draws on three inputs: the patient’s emotional state (detected from pulse patterns), the time of day, and patient-provided examples of how they prefer to communicate. Patients switch between direct word-for-word output and these enriched sentences with a simple head gesture. Since stroke patients often experience severe fatigue from forming words (even silently), short expressions allow them to communicate their intent without physical strain.

The system detects three emotional states most common in stroke recovery: neutral, relieved, and frustrated. By analyzing pulse signal patterns that correlate with autonomic nervous system changes, it can tag speech with one of these emotional tones with 83.2% accuracy.
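The optional expansion step described above can be sketched in a few lines. This is a hedged illustration, not the system's actual code: `ExpansionContext`, `build_expansion_prompt`, `render_output`, and the prompt wording are all hypothetical, and the language model is represented by any caller-supplied function that maps a prompt string to a reply string. Only the three emotion labels, the use of time of day and patient examples, and the direct/expanded switch come from the article.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Sequence

# The three emotional states named in the article.
EMOTIONS = ("neutral", "relieved", "frustrated")


@dataclass
class ExpansionContext:
    """Hypothetical container for the inputs used during expansion."""
    emotion: str
    time_of_day: str
    style_examples: List[str] = field(default_factory=list)


def build_expansion_prompt(
    decoded_words: Sequence[str], context: ExpansionContext
) -> str:
    """Assemble a text prompt from the decoded fragment and its context."""
    if context.emotion not in EMOTIONS:
        raise ValueError(f"unknown emotion tag: {context.emotion!r}")
    lines = [
        "Expand the fragment into one natural sentence "
        "without changing its intent.",
        f"Fragment: {' '.join(decoded_words)}",
        f"Emotion: {context.emotion}",
        f"Time of day: {context.time_of_day}",
    ]
    # Patient-provided examples steer the phrasing toward their own style.
    for example in context.style_examples:
        lines.append(f"Style example: {example}")
    return "\n".join(lines)


def render_output(
    decoded_words: Sequence[str],
    context: ExpansionContext,
    expand: bool,
    language_model: Callable[[str], str],
) -> str:
    """Return direct word-for-word text, or an expanded sentence on request."""
    if not expand:
        # Direct mode: speak exactly what was decoded.
        return " ".join(decoded_words)
    return language_model(build_expansion_prompt(decoded_words, context))
```

In this sketch the `expand` flag stands in for the head gesture: with `expand=False`, the fragment “We go hospital” is passed through unchanged, and with `expand=True` the same fragment is wrapped in context and sent to whatever model the caller supplies. Keeping the model behind a plain function argument is a design choice made here for testability, not something the article describes.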

Testing Shows High Accuracy for Stroke Survivors

Researchers first taught the system patterns from 10 healthy volunteers, then fine-tuned it for each patient. In learning tests, they found that performance jumped significantly after about 25 repetitions per word, showing the system could adapt quickly to individual speech patterns affected by stroke.

Five stroke patients, averaging 43 years old with varying degrees of speech impairment, tested the system through multiple sessions. All retained enough muscle control to mouth words silently. Beyond measuring accuracy, researchers evaluated whether the expanded sentences felt natural and captured intended meaning. Patients rated the system on completeness, fluency, personalization, and emotional accuracy, with the AI-enhanced output scoring higher than basic word transcription across all measures.

Total delay from silent articulation to synthesized speech output is approximately one second, fast enough for seamless back-and-forth conversation.

Revoice Goes Beyond Stroke Recovery

The technology could benefit people with various neurological conditions affecting speech, including ALS and Parkinson’s disease. Unlike brain-computer interfaces requiring surgical implantation, this approach uses comfortable, non-invasive sensors suitable for daily wear.

Researchers are expanding trials to include more participants with diverse stroke symptoms and language backgrounds. Future versions will incorporate flexible circuit boards to reduce weight and may add sensors for more robust emotion detection in various settings.

The system moves away from assistive communication devices that spell out letters one by one or require users to select pre-written phrases. Users can express themselves naturally while AI handles the complex task of interpretation and enhancement.

For rehabilitation, the ability to communicate effectively helps patients engage more actively with therapists and family. This social connection supports both physical recovery and mental health during the challenging post-stroke period.

The research demonstrates how combining sensitive wearable sensors with modern AI can restore capabilities once thought permanently lost after brain injury. As the technology matures, it may become as commonplace as hearing aids: a discreet tool that gives people back something essential to human connection.

Paper Summary

Limitations

The study involved only five stroke patients and a limited 47-word Chinese vocabulary. The emotion detection relies solely on pulse signals, which may be less robust under varied physical activity levels (though stroke patients typically have limited mobility). The system currently requires training on individual patients. Long-term studies are needed to assess device durability, signal stability as patients’ conditions change, and effectiveness across broader populations.

Funding and Disclosures

The research was supported by the National Natural Science Foundation of China (62171014), Beihang Ganwei Project (JKF-20240590), British Council (45371261), UK Engineering and Physical Sciences Research Council (EPSRC EP/K03099X/1, EP/W024284/1), and Haleon through the CAPE partnership contract at the University of Cambridge (G110480). The authors declare no competing interests.

Publication Details

Chenyu Tang, Shuo Gao, Cong Li, et al. “Wearable intelligent throat enables natural speech in stroke patients with dysarthria.” Published January 19, 2026 in Nature Communications 17, 293. DOI: 10.1038/s41467-025-68228-9. Authors are affiliated with the University of Cambridge Department of Engineering (UK), Beihang University School of Instrumentation and Optoelectronic Engineering (China), Beijing Tsinghua Changgung Hospital Department of Rehabilitation Medicine (China), University College London, and other institutions.