NEW YORK — Everyone develops a body image as they grow. Knowing our own bodies helps in every aspect of life, from moving around, to getting dressed, to recognizing when an injury is limiting our abilities. Now, a team from Columbia Engineering has created the first robot that can imagine itself as well.

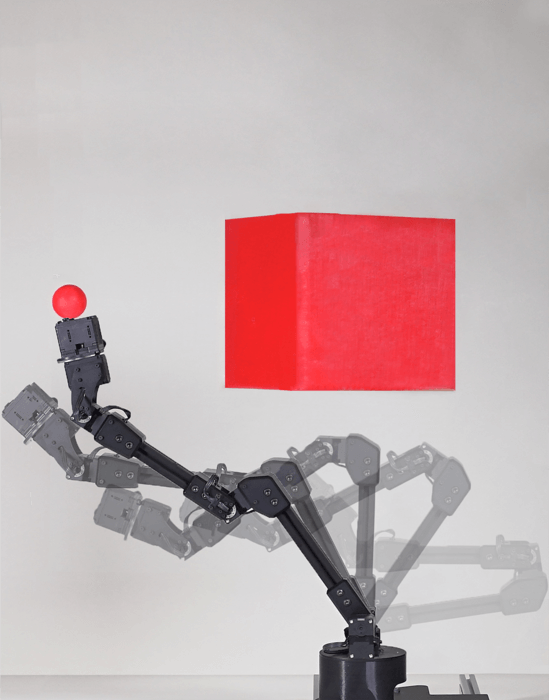

Researchers note that humans acquire a model of their own bodies as babies. The new robot does the same. Without any help from the researchers, it created a kinematic model of itself, which it used to plan motions, reach goals, and avoid obstacles in a variety of situations.

The robot was even capable of recognizing and compensating for damage that slowed down its performance. In a sense, the robot became self-aware.

Having fun in the mirror

Study authors say they accomplished this by placing the robot in the middle of a circle of five streaming cameras. The experiment was similar to placing a child in a hall of mirrors and letting them explore what all of their features and movements look like.

Just like a child, the robot wiggled and contorted itself to learn what it looked like as it performed various motor commands. After three hours, the robot came to a stop, signaling that its deep neural network had finished learning the relationship between its motor actions and the space it occupied while performing them.

“We were really curious to see how the robot imagined itself,” says Hod Lipson, professor of mechanical engineering and director of Columbia’s Creative Machines Lab, in a media release. “But you can’t just peek into a neural network, it’s a black box.”

“It was a sort of gently flickering cloud that appeared to engulf the robot’s three-dimensional body,” Lipson continues. “As the robot moved, the flickering cloud gently followed it.”

Researchers note that the robot’s body image was accurate to within one percent of its workspace.
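
The relationship described above, between motor commands and the space the robot occupies, can be pictured as a simple question: given a set of joint angles, is a particular point in space part of the robot? The Python sketch below is only an illustration of that idea, not the researchers' method. It assumes a hypothetical two-link arm moving in a flat plane, and it answers the question with hand-written geometry where the real robot relied on a trained neural network. The names `LINK_LENGTHS`, `LINK_RADIUS`, and `occupied` are invented for this example.

```python
import math

# Hypothetical toy arm (an assumption for illustration only):
# two links in a plane, each 1.0 unit long and 0.1 units thick.
LINK_LENGTHS = (1.0, 1.0)
LINK_RADIUS = 0.05


def joint_positions(angles):
    """Forward kinematics: return the base, elbow, and tip points."""
    x, y, heading = 0.0, 0.0, 0.0
    points = [(x, y)]
    for length, angle in zip(LINK_LENGTHS, angles):
        heading += angle  # each joint angle is relative to the previous link
        x += length * math.cos(heading)
        y += length * math.sin(heading)
        points.append((x, y))
    return points


def distance_to_segment(p, a, b):
    """Shortest distance from point p to the line segment a-b."""
    (px, py), (ax, ay), (bx, by) = p, a, b
    dx, dy = bx - ax, by - ay
    # Project p onto the segment and clamp to its endpoints.
    t = ((px - ax) * dx + (py - ay) * dy) / (dx * dx + dy * dy)
    t = max(0.0, min(1.0, t))
    return math.hypot(px - (ax + t * dx), py - (ay + t * dy))


def occupied(point, angles):
    """Self-model query: does the arm cover `point` at these joint angles?"""
    pts = joint_positions(angles)
    return any(
        distance_to_segment(point, a, b) <= LINK_RADIUS
        for a, b in zip(pts, pts[1:])
    )


if __name__ == "__main__":
    straight = (0.0, 0.0)         # arm stretched along the x-axis
    raised = (math.pi / 2, 0.0)   # arm pointing straight up
    print(occupied((1.5, 0.0), straight))  # point on the outstretched arm
    print(occupied((1.5, 0.0), raised))    # same point after the arm moves
```

A learned self-model would aim to answer the same kind of query without being handed the link lengths or joint layout in advance. A planner could then check a proposed motion by asking whether any point inside an obstacle would become occupied.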

Why do robots need to know about body image?

Columbia engineers say giving robots the ability to imagine themselves without help could be an important step forward in automation. With more and more jobs now relying on robots, a machine that can keep track of its own wear and tear, or detect an “injury” and compensate for it, would keep production moving.

Study authors argue that society needs robots that are more self-reliant and can either call for assistance or make up for drops in their own performance.

“We humans clearly have a notion of self,” explains study first author Boyuan Chen, now an assistant professor at Duke University. “Close your eyes and try to imagine how your own body would move if you were to take some action, such as stretch your arms forward or take a step backward. Somewhere inside our brain we have a notion of self, a self-model that informs us what volume of our immediate surroundings we occupy, and how that volume changes as we move.”

Should robots be self-aware?

Lipson admits that making robots self-aware is a controversial subject.

“Self-modeling is a primitive form of self-awareness,” the researcher says. “If a robot, animal, or human, has an accurate self-model, it can function better in the world, it can make better decisions, and it has an evolutionary advantage.”

Despite the risks of giving machines more autonomy, Lipson contends that the amount of self-awareness this particular robot has is “trivial compared to that of humans, but you have to start somewhere. We have to go slowly and carefully, so we can reap the benefits while minimizing the risks.”

The study is published in the journal Science Robotics.