
CAMBRIDGE, United Kingdom — More and more companies are using AI-powered recruitment tools that claim to eliminate bias and increase workplace diversity. These tools are designed to process large volumes of job applicants and utilize algorithms to assess personality traits, speech patterns, and facial expressions, but do they really work? Researchers from the University of Cambridge suggest these claims are misleading and potentially dangerous. They liken some AI tools to “automated pseudoscience” reminiscent of outdated beliefs like physiognomy and phrenology.

“We are concerned that some vendors are wrapping ‘snake oil’ products in a shiny package and selling them to unsuspecting customers,” says co-author Dr. Eleanor Drage in a university release. “By claiming that racism, sexism, and other forms of discrimination can be stripped away from the hiring process using artificial intelligence, these companies reduce race and gender down to insignificant data points, rather than systems of power that shape how we move through the world.”

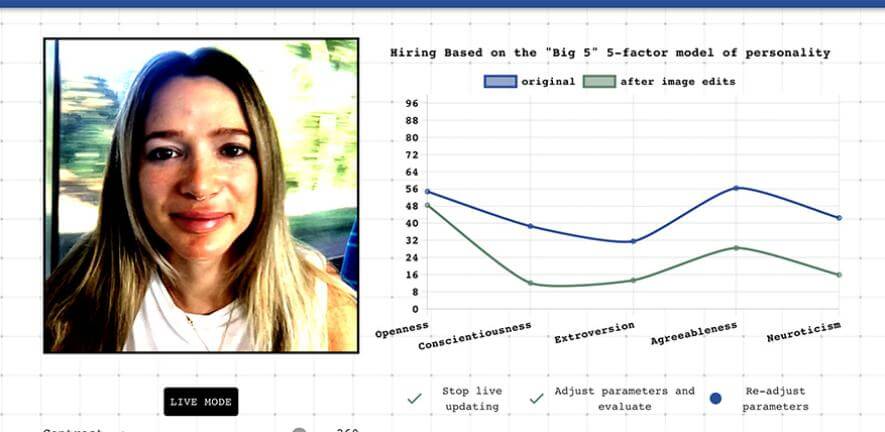

One particular area of concern is the use of personality analysis in AI recruitment tools. These tools claim to assess the “ideal candidate” by analyzing vocabulary, speech patterns, and facial micro-expressions. However, the Cambridge researchers, in collaboration with computer science undergraduates, demonstrated how random changes in factors such as clothing, lighting, and background could lead to drastically different personality readings. This suggests that these tools may be unreliable and subject to arbitrary judgments that could unfairly impact job seekers.

Rather than promoting diversity, AI recruitment tools could end up reducing it. The technology is calibrated to search for the employer’s ideal candidate, which may inadvertently reinforce existing biases and preferences. Candidates who replicate the behaviors and attitudes the algorithms are tuned to reward may gain an advantage, perpetuating a homogeneous work environment. Furthermore, because these algorithms rely on past data to make predictions, they may favor candidates who resemble the current workforce, further limiting diversity.

The researchers argue that relying solely on AI tools to address diversity issues in the workplace is a form of “technosolutionism.” Actual progress requires investment and changes to company culture rather than quick fixes provided by technology. While AI may have a role to play in recruitment, it should not be seen as a panacea for deep-rooted discrimination problems. To achieve genuine diversity, researchers say organizations must commit to fostering inclusive environments and implementing fair hiring practices that go beyond surface-level data analysis.

Another concern raised by the team is the lack of regulation and accountability surrounding AI recruitment tools. Many of these tools are proprietary, and their inner workings are not publicly disclosed. This lack of transparency makes it difficult to assess potential biases and allows companies to market their products without sufficient scrutiny. The researchers call for more regulation and transparency to prevent these tools from becoming sources of misinformation and perpetuating unfair hiring practices.

While the use of AI in recruitment is on the rise, it is essential to critically evaluate its impact on workplace diversity. AI recruitment tools should not be seen as a standalone solution, but rather as a complement to comprehensive efforts to create inclusive and fair hiring practices, according to the study authors. Transparency, regulation, and ongoing research are crucial to ensure that these tools do not perpetuate biases and that they genuinely contribute to fostering diverse work environments.

The study is published in the journal Philosophy & Technology.
