Quantum computer programmer writing code for quantum algorithms (© Microgen - stock.adobe.com)

NEW YORK — Could quantum computers be overhyped? New York University scientists have found a way to enhance classical computing so that it performs certain calculations faster and more accurately than today's most advanced quantum computers. Simply put, conventional computers that run on binary bits (1s and 0s) could, under certain conditions, match or even outperform their quantum counterparts.

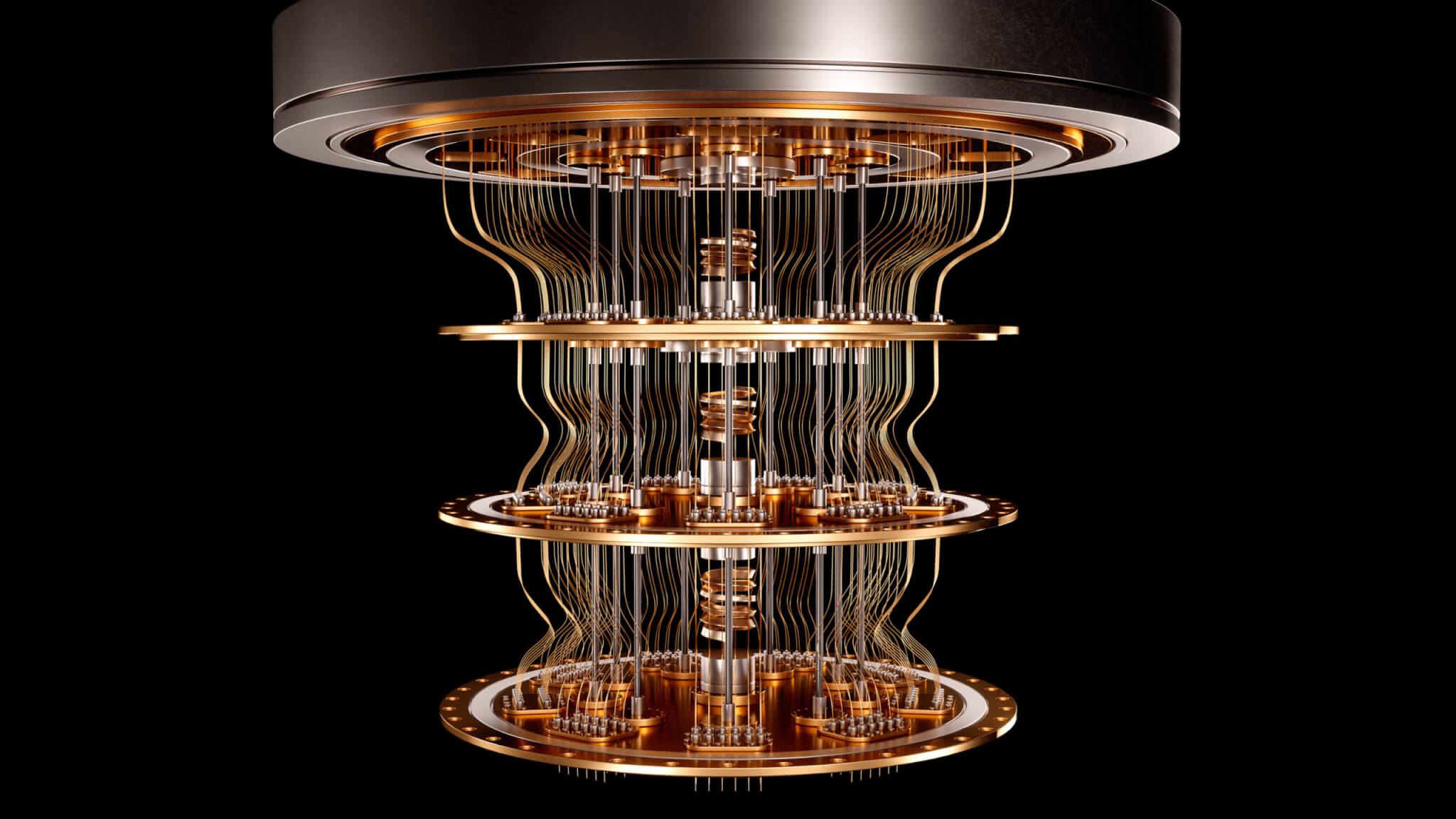

This research challenges the widely held belief that quantum computing will inevitably surpass classical methods in speed and efficiency. Quantum computing has been celebrated for its potential to revolutionize how we process information. It relies on quantum bits, or qubits, which, unlike the binary bits (0s and 1s) used in conventional computing, can exist in a superposition of 0 and 1.

This ability allows quantum computers to process a vast amount of information simultaneously, promising breakthroughs in fields ranging from cryptography to materials science. However, quantum technology faces significant hurdles, such as information loss and the challenge of translating quantum information into a form that can yield useful computations.

The recent study presents a novel approach that could tip the scales back in favor of classical computing. By developing an algorithm that strategically retains only the essential parts of the information stored in a quantum state, the team has demonstrated that classical computers can, under certain conditions, outperform quantum ones.

“This work shows that there are many potential routes to improving computations, encompassing both classical and quantum approaches,” says study author Dries Sels, an assistant professor in NYU’s Department of Physics, in a university release. “Moreover, our work highlights how difficult it is to achieve quantum advantage with an error-prone quantum computer.”

This research focuses on a specific type of tensor network that accurately represents the interactions between qubits. Tensor networks, though complex, are essential for understanding the quantum state of a system. The team’s breakthrough came from applying optimization tools from statistical inference to these networks, making it possible to handle them more efficiently than ever before.

Joseph Tindall of the Flatiron Institute, who spearheaded the project, likens the algorithm’s operation to the process of compressing an image into a JPEG file. This analogy helps explain how the algorithm manages to store significant amounts of information using less space, akin to image compression techniques that reduce file size with minimal quality loss.

“Choosing different structures for the tensor network corresponds to choosing different forms of compression, like different formats for your image,” explains Tindall. “We are successfully developing tools for working with a wide range of different tensor networks. This work reflects that, and we are confident that we will soon be raising the bar for quantum computing even further.”
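The compression analogy can be made concrete with a small sketch. The code below is not the study's algorithm. It swaps in a plain truncated singular value decomposition (SVD) on a simple chain-shaped tensor network, often called a matrix product state, purely to illustrate the idea of keeping only the most important parts of a quantum state. The function names (`truncated_split`, `to_mps`, `from_mps`, `fidelity`), the 10-qubit size, and the `max_bond` cutoff are all assumptions chosen for illustration.

```python
import numpy as np


def truncated_split(matrix, max_bond):
    """SVD a matrix and keep only the `max_bond` largest singular values."""
    u, s, vh = np.linalg.svd(matrix, full_matrices=False)
    k = min(max_bond, len(s))
    return u[:, :k], s[:k], vh[:k, :]


def to_mps(state, n_qubits, max_bond):
    """Compress a state vector into a chain of small tensors, one per qubit."""
    tensors = []
    remainder = state.reshape(1, -1)  # (left bond, all remaining qubits)
    for _ in range(n_qubits - 1):
        left = remainder.shape[0]
        # Peel off one qubit: rows = (left bond, this qubit), columns = the rest.
        remainder = remainder.reshape(left * 2, -1)
        u, s, vh = truncated_split(remainder, max_bond)
        tensors.append(u.reshape(left, 2, -1))
        remainder = s[:, None] * vh  # carry the kept weights to the right
    tensors.append(remainder.reshape(remainder.shape[0], 2, 1))
    return tensors


def from_mps(tensors):
    """Contract the chain back into a full state vector."""
    result = tensors[0]
    for t in tensors[1:]:
        result = np.tensordot(result, t, axes=([-1], [0]))
    return result.reshape(-1)


def fidelity(state, approx):
    """Squared overlap between the state and the renormalized approximation."""
    approx = approx / np.linalg.norm(approx)
    return abs(np.vdot(state, approx)) ** 2


n = 10  # hypothetical system size

# A weakly entangled state: equal mix of all-0s and all-1s.
ghz = np.zeros(2**n)
ghz[0] = ghz[-1] = 1 / np.sqrt(2)

# A generic, highly entangled state for contrast.
rng = np.random.default_rng(0)
random_state = rng.normal(size=2**n)
random_state /= np.linalg.norm(random_state)

for name, state in [("GHZ", ghz), ("random", random_state)]:
    mps = to_mps(state, n, max_bond=2)
    stored = sum(t.size for t in mps)
    print(name, "numbers stored:", stored, "of", state.size,
          "fidelity:", fidelity(state, from_mps(mps)))
```

In this toy setting the structured state survives aggressive compression intact, while the random state does not. That mirrors the article's caveat that classical methods win only under certain conditions: how well the compression works depends on how much structure the quantum state has and on how well the chosen network matches it.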

The implications of this research are profound, potentially delaying the onset of the quantum computing era by showing that classical methods are still very much in the game. By refining classical algorithms to mimic quantum computing processes, the researchers have opened up new avenues for computational improvement that leverage the stability and reliability of classical computers.

As the team continues to refine their methods and develop tools for managing complex tensor networks, they are optimistic about further advancing the capabilities of classical computing. This work not only challenges the current understanding of computational hierarchies but also emphasizes the importance of exploring all avenues for technological advancement, whether quantum or classical.

The study is published in the journal PRX Quantum.
