(© M.Dörr & M.Frommherz - stock.adobe.com)

PITTSBURGH — Robots could soon be tidying up our messy bedrooms, just like on “The Jetsons.” Scientists at Carnegie Mellon University are teaching machines how to carry out everyday household chores the same way plenty of people learn these life skills — by watching “how-to” videos.

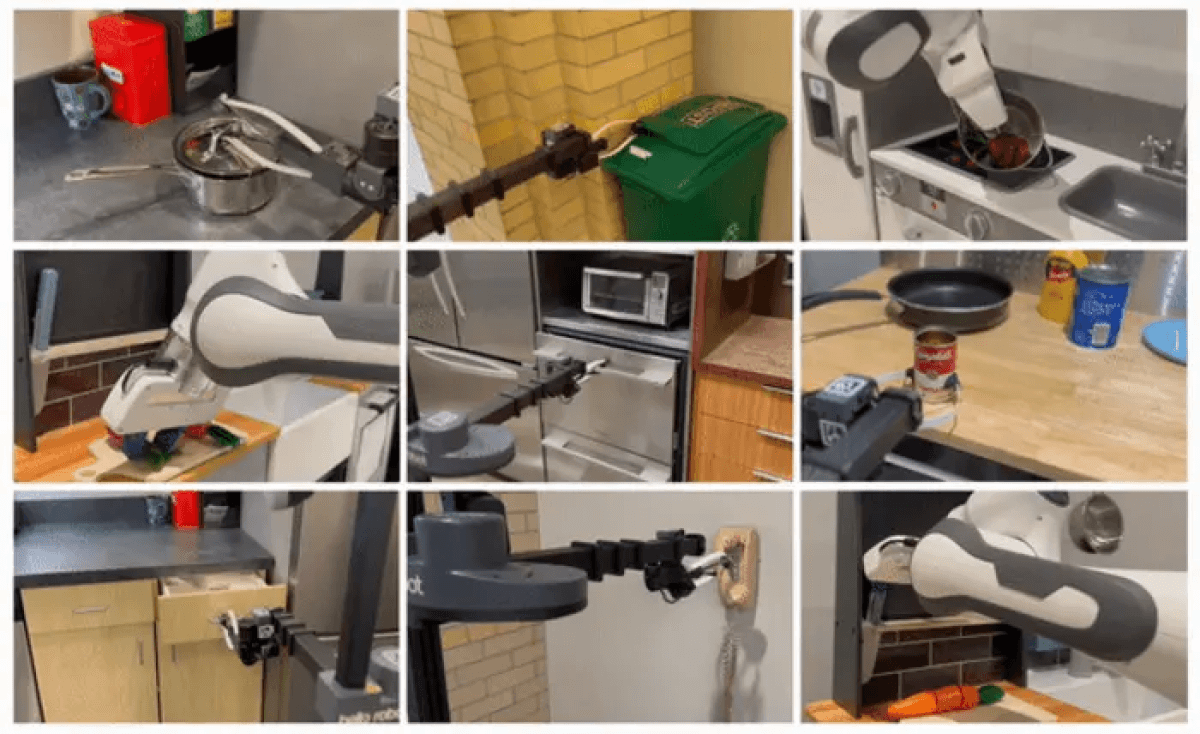

The team says their work is a major step towards artificial intelligence (AI) assisting humans with everything from cooking to cleaning. Two machines successfully learned 12 tasks, including opening drawers, oven doors, and lids. The robots also took a pot off the stove, picked up a vegetable and a can of soup, and answered the telephone.

“The robot can learn where and how humans interact with different objects through watching videos,” says Deepak Pathak, an assistant professor in the Robotics Institute at CMU’s School of Computer Science. “From this knowledge, we can train a model that enables two robots to complete similar tasks in varied environments.”

Current methods of training robots require either the manual demonstration of tasks by humans or extensive training in a simulated environment. Both can be time-consuming and prone to failure. The CMU team previously demonstrated a novel method in which robots learn from observing humans complete simple tasks. However, that method, called WHIRL (short for In-the-Wild Human Imitating Robot Learning), required human participants to complete the task in the same environment as the robot.

Now, Vision-Robotics Bridge (VRB) builds on and improves WHIRL. It eliminates the necessity of human demonstrations as well as the need for the robot to operate within an identical environment. As with WHIRL, the robot still requires practice to master a task. Experiments revealed that robots can learn a new task in as little as 25 minutes.

Robotics often faces a chicken and egg problem: no web-scale robot data for training (unlike CV or NLP) b/c robots aren’t deployed yet & vice-versa.

Introducing VRB: Use large-scale human videos to train a *general-purpose* affordance model to jumpstart any robotics paradigm! pic.twitter.com/csbvsfswuG

— Deepak Pathak (@pathak2206) June 13, 2023

“We were able to take robots around campus and do all sorts of tasks,” says Shikhar Bahl, a Ph.D. student in robotics, in a university release. “Robots can use this model to curiously explore the world around them. Instead of just flailing its arms, a robot can be more direct with how it interacts.”

To teach the robot how to interact with an object, the team applied the concept of affordances. Affordances have their roots in psychology and refer to what an environment offers an individual. The concept has been extended to design and human-computer interaction to refer to potential actions perceived by an individual.

VRB interacts with an object based on human behavior. For example, as a robot watches a video of a human opening a drawer, it identifies the contact points — the handle — and the direction of the drawer’s movement — straight out from the starting location. After watching several videos of humans opening drawers, the robot can determine how to open any drawer.
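To make the drawer example concrete, here is a minimal Python sketch of the idea of an affordance as a contact point plus a direction of movement. It is only an illustration and not the CMU team's model, which is trained on large video datasets. The `Observation` class, the `summarize_affordance` function, and the sample numbers are all hypothetical, and the simple averaging stands in for whatever the real system learns.

```python
from dataclasses import dataclass
from math import hypot
from typing import List, Tuple


@dataclass
class Observation:
    """One hypothetical measurement taken from a single how-to video."""
    contact: Tuple[float, float]    # where the hand touched the object (normalized image coordinates)
    direction: Tuple[float, float]  # how the hand moved right after contact


def summarize_affordance(observations: List[Observation]):
    """Average several observations into one contact point and one unit direction."""
    if not observations:
        raise ValueError("at least one observation is required")
    n = len(observations)
    # Mean contact point across all videos.
    cx = sum(o.contact[0] for o in observations) / n
    cy = sum(o.contact[1] for o in observations) / n
    # Sum the motion vectors, then scale the result to unit length.
    dx = sum(o.direction[0] for o in observations)
    dy = sum(o.direction[1] for o in observations)
    norm = hypot(dx, dy)
    direction = (dx / norm, dy / norm) if norm > 0 else (0.0, 0.0)
    return (cx, cy), direction


# Two made-up "videos" of a drawer being pulled toward the camera.
videos = [
    Observation(contact=(0.4, 0.5), direction=(0.0, -1.0)),
    Observation(contact=(0.6, 0.5), direction=(0.0, -2.0)),
]
contact_point, pull_direction = summarize_affordance(videos)
print(contact_point, pull_direction)
```

In this toy version, the contact point plays the role of the handle and the direction plays the role of the drawer's motion. A robot would still need practice on the real object, as the article notes.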

The team used videos from large datasets such as Ego4D and Epic Kitchens. Ego4D has nearly 4,000 hours of egocentric videos of daily activities from across the world. Epic Kitchens features similar videos capturing cooking, cleaning, and other kitchen tasks. Both datasets are intended to help train computer vision models.

“We are using these datasets in a new and different way,” Bahl concludes. “This work could enable robots to learn from the vast amount of internet and YouTube videos available.”

The team presented VRB at the Conference on Computer Vision and Pattern Recognition (CVPR) in Vancouver.

South West News Service writer Mark Waghorn contributed to this report.